The Spring Framework - Reference Documentation - Spring Framework reference 2.0.5 (English edition of the reference manual)

2.0.5

- Preface

- 1. Introduction

- 2. What's new in Spring 2.0?

- I. Core Technologies

- 3. The IoC container

- 3.1. Introduction

- 3.2. Basics - containers and beans

- 3.3. Dependencies

- 3.4. Bean scopes

- 3.5. Customizing the nature of a bean

- 3.6. Bean definition inheritance

- 3.7. Container extension points

- 3.8. The ApplicationContext

- 3.9. Glue code and the evil singleton

- 4. Resources

- 4.1. Introduction

- 4.2. The Resource interface

- 4.3. Built-in Resource implementations

- 4.4. The ResourceLoader

- 4.5. The ResourceLoaderAware interface

- 4.6. Resources as dependencies

- 4.7. Application contexts and Resource paths

- 5. Validation, Data-binding, the BeanWrapper, and PropertyEditors

- 6. Aspect Oriented Programming with Spring

- 6.1. Introduction

- 6.2. @AspectJ support

- 6.3. Schema-based AOP support

- 6.4. Choosing which AOP declaration style to use

- 6.5. Mixing aspect types

- 6.6. Proxying mechanisms

- 6.7. Programmatic creation of @AspectJ Proxies

- 6.8. Using AspectJ with Spring applications

- 6.9. Further Resources

- 7. Spring AOP APIs

- 7.1. Introduction

- 7.2. Pointcut API in Spring

- 7.3. Advice API in Spring

- 7.4. Advisor API in Spring

- 7.5. Using the ProxyFactoryBean to create AOP proxies

- 7.6. Concise proxy definitions

- 7.7. Creating AOP proxies programmatically with the ProxyFactory

- 7.8. Manipulating advised objects

- 7.9. Using the "autoproxy" facility

- 7.10. Using TargetSources

- 7.11. Defining new Advice types

- 7.12. Further resources

- 8. Testing

- II. Middle Tier Data Access

- 9. Transaction management

- 9.1. Introduction

- 9.2. Motivations

- 9.3. Key abstractions

- 9.4. Resource synchronization with transactions

- 9.5. Declarative transaction management

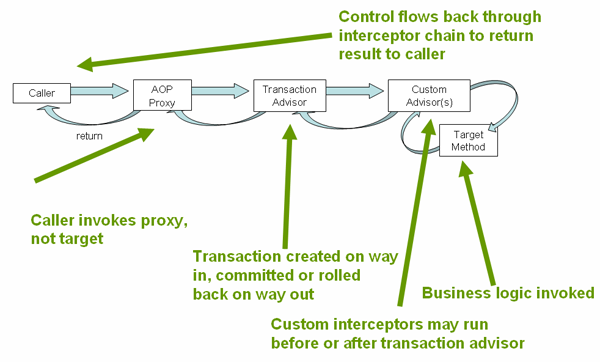

- 9.5.1. Understanding the Spring Framework's declarative transaction implementation

- 9.5.2. A first example

- 9.5.3. Rolling back

- 9.5.4. Configuring different transactional semantics for different beans

- 9.5.5. <tx:advice/> settings

- 9.5.6. Using @Transactional

- 9.5.7. Advising transactional operations

- 9.5.8. Using @Transactional with AspectJ

- 9.6. Programmatic transaction management

- 9.7. Choosing between programmatic and declarative transaction management

- 9.8. Application server-specific integration

- 9.9. Solutions to common problems

- 9.10. Further Resources

- 10. DAO support

- 11. Data access using JDBC

- 12. Object Relational Mapping (ORM) data access

- 12.1. Introduction

- 12.2. Hibernate

- 12.2.1. Resource management

- 12.2.2. SessionFactory setup in a Spring container

- 12.2.3. The HibernateTemplate

- 12.2.4. Implementing Spring-based DAOs without callbacks

- 12.2.5. Implementing DAOs based on plain Hibernate3 API

- 12.2.6. Programmatic transaction demarcation

- 12.2.7. Declarative transaction demarcation

- 12.2.8. Transaction management strategies

- 12.2.9. Container resources versus local resources

- 12.2.10. Spurious application server warnings when using Hibernate

- 12.3. JDO

- 12.4. Oracle TopLink

- 12.5. iBATIS SQL Maps

- 12.6. JPA

- 12.7. Transaction Management

- 12.8. JpaDialect

- III. The Web

- 13. Web MVC framework

- 13.1. Introduction

- 13.2. The DispatcherServlet

- 13.3. Controllers

- 13.4. Handler mappings

- 13.5. Views and resolving them

- 13.6. Using locales

- 13.7. Using themes

- 13.8. Spring's multipart (fileupload) support

- 13.9. Using Spring's form tag library

- 13.10. Handling exceptions

- 13.11. Convention over configuration

- 13.12. Further Resources

- 14. Integrating view technologies

- 14.1. Introduction

- 14.2. JSP & JSTL

- 14.3. Tiles

- 14.4. Velocity & FreeMarker

- 14.5. XSLT

- 14.6. Document views (PDF/Excel)

- 14.7. JasperReports

- 15. Integrating with other web frameworks

- 16. Portlet MVC Framework

- IV. Integration

- 17. Remoting and web services using Spring

- 18. Enterprise Java Bean (EJB) integration

- 19. JMS

- 20. JMX

- 20.1. Introduction

- 20.2. Exporting your beans to JMX

- 20.3. Controlling the management interface of your beans

- 20.3.1. The MBeanInfoAssembler Interface

- 20.3.2. Using source-Level metadata

- 20.3.3. Using JDK 5.0 Annotations

- 20.3.4. Source-Level Metadata Types

- 20.3.5. The AutodetectCapableMBeanInfoAssembler interface

- 20.3.6. Defining Management interfaces using Java interfaces

- 20.3.7. Using MethodNameBasedMBeanInfoAssembler


- 20.4. Controlling the ObjectNames for your beans

- 20.5. JSR-160 Connectors

- 20.6. Accessing MBeans via Proxies

- 20.7. Notifications

- 20.8. Further Resources

- 21. JCA CCI

- 22. Email

- 23. Scheduling and Thread Pooling

- 24. Dynamic language support

- 25. Annotations and Source Level Metadata Support

- V. Sample applications

- A. XML Schema-based configuration

- A.1. Introduction

- A.2. XML Schema-based configuration

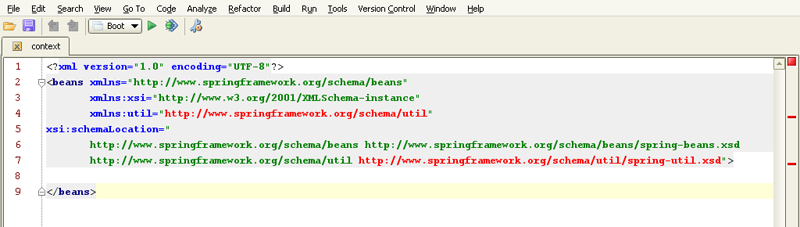

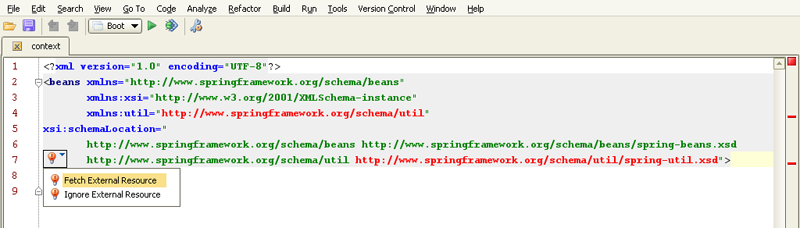

- A.2.1. Referencing the schemas

- A.2.2. The util schema

- A.2.3. The jee schema

- A.2.3.1. <jee:jndi-lookup/> (simple)

- A.2.3.2. <jee:jndi-lookup/> (with single JNDI environment setting)

- A.2.3.3. <jee:jndi-lookup/> (with multiple JNDI environment settings)

- A.2.3.4. <jee:jndi-lookup/> (complex)

- A.2.3.5. <jee:local-slsb/> (simple)

- A.2.3.6. <jee:local-slsb/> (complex)

- A.2.3.7. <jee:remote-slsb/>


- A.2.4. The lang schema

- A.2.5. The tx (transaction) schema

- A.2.6. The aop schema

- A.2.7. The tool schema

- A.2.8. The beans schema

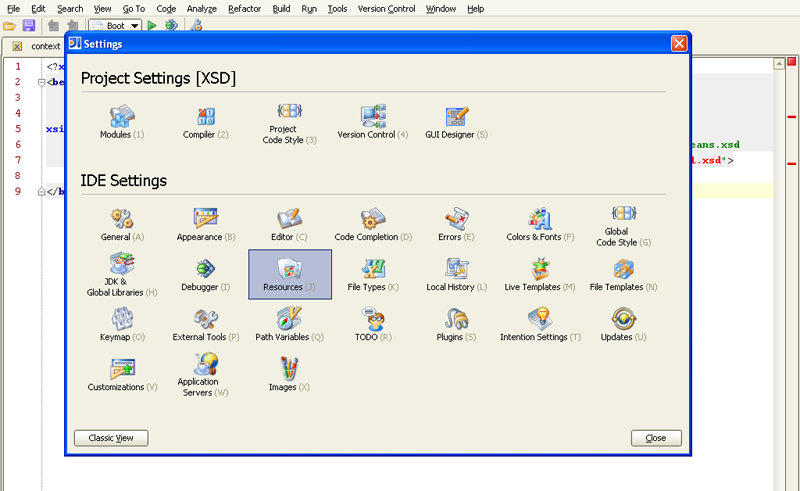

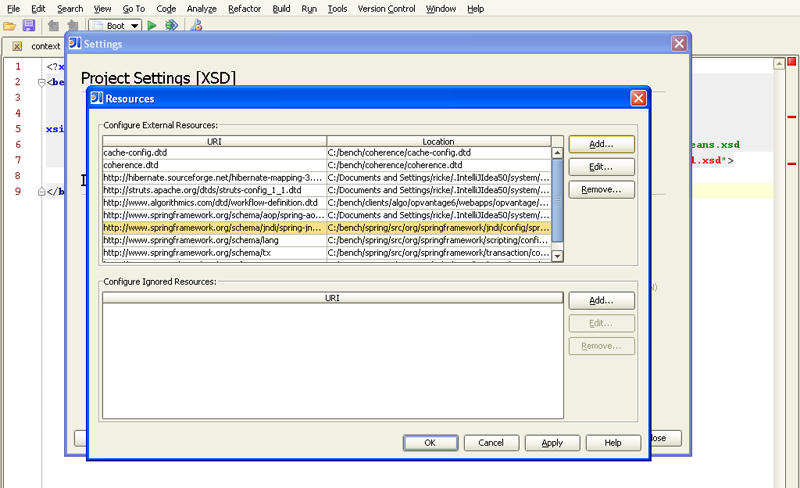

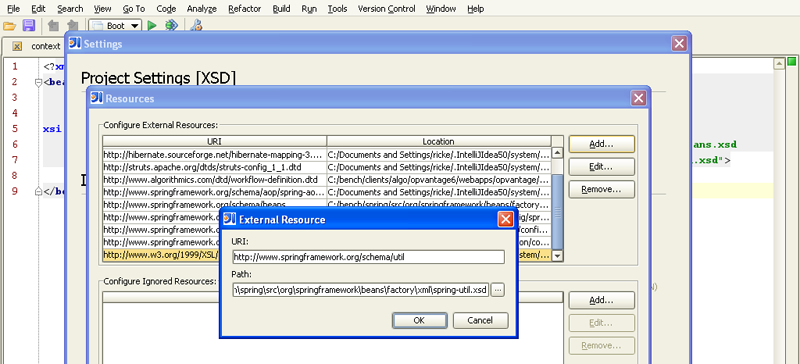

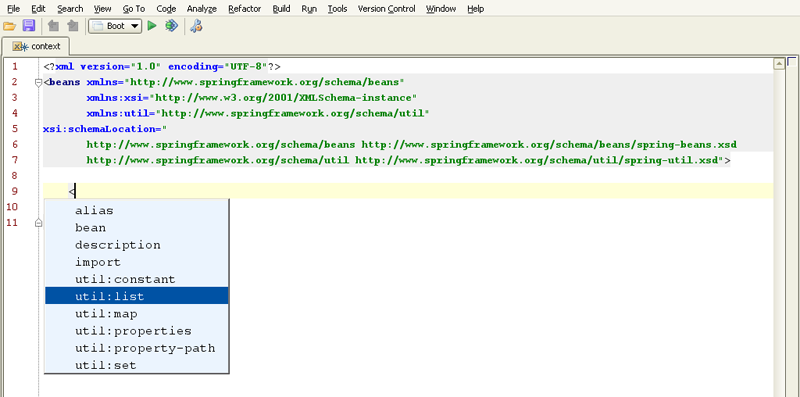

- A.3. Setting up your IDE

- B. Extensible XML authoring

- C. spring-beans-2.0.dtd

- D. spring.tld

- E. spring-form.tld

Developing software applications is hard enough even with good tools and technologies. Implementing applications using platforms which promise everything but turn out to be heavy-weight, hard to control and not very efficient during the development cycle makes it even harder. Spring provides a light-weight solution for building enterprise-ready applications, while still supporting the possibility of using declarative transaction management, remote access to your logic using RMI or web services, and various options for persisting your data to a database. Spring provides a full-featured MVC framework, and transparent ways of integrating AOP into your software.

Spring could potentially be a one-stop-shop for all your enterprise applications; however, Spring is modular, allowing you to use just those parts of it that you need, without having to bring in the rest. You can use the IoC container, with Struts on top, but you could also choose to use just the Hibernate integration code or the JDBC abstraction layer. Spring has been (and continues to be) designed to be non-intrusive, meaning dependencies on the framework itself are generally none (or absolutely minimal, depending on the area of use).

This document provides a reference guide to Spring's features. Since this document is still to be considered very much work-in-progress, if you have any requests or comments, please post them on the user mailing list or on the support forums at http://forum.springframework.org/.

Before we go on, a few words of gratitude are due to Christian Bauer (of the Hibernate team), who prepared and adapted the DocBook-XSL software in order to be able to create Hibernate's reference guide, thus also allowing us to create this one. Also thanks to Russell Healy for doing an extensive and valuable review of some of the material.

Java applications (a loose term which runs the gamut from constrained applets to full-fledged n-tier server-side enterprise applications) typically are composed of a number of objects that collaborate with one another to form the application proper. The objects in an application can thus be said to have dependencies between themselves.

The Java language and platform provide a wealth of functionality for architecting and building applications, ranging all the way from the very basic building blocks of primitive types and classes (and the means to define new classes), to rich full-featured application servers and web frameworks. One area that is decidedly conspicuous by its absence is any means of taking the basic building blocks and composing them into a coherent whole; this area has typically been left to the purview of the architects and developers tasked with building an application (or applications). Now to be fair, there are a number of design patterns devoted to the business of composing the various classes and object instances that make up an all-singing, all-dancing application. Design patterns such as Factory, Abstract Factory, Builder, Decorator, and Service Locator (to name but a few) have widespread recognition and acceptance within the software development industry (presumably that is why these patterns have been formalized as patterns in the first place). This is all very well, but these patterns are just that: best practices given a name, typically together with a description of what the pattern does, where the pattern is typically best applied, the problems that the application of the pattern addresses, and so forth. Notice that the previous sentence used the phrase “... a description of what the pattern does...”; pattern books and wikis are typically listings of such formalized best practices that you can certainly take away, mull over, and then implement yourself in your application.

The IoC component of the Spring Framework addresses the enterprise concern of taking the classes, objects, and services that are to compose an application, by providing a formalized means of composing these various disparate components into a fully working application ready for use. The Spring Framework takes best practices that have been proven over the years in numerous applications and formalized as design patterns, and actually codifies these patterns as first class objects that you as an architect and developer can take away and integrate into your own application(s). This is a Very Good Thing Indeed as attested to by the numerous organizations and institutions that have used the Spring Framework to engineer robust, maintainable applications.
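To make the composition problem concrete, here is a minimal plain-Java sketch of the wiring an application performs by hand when no container is involved. It uses no Spring APIs, and every class name in it is hypothetical. The service receives its collaborator through its constructor. A single assemble() method is the one place that decides which implementations are plugged together, and that is the job the Spring IoC container takes over, driven by configuration metadata.

```java
import java.util.HashMap;
import java.util.Map;

public class ManualWiring {

    // A collaborator expressed as an interface, so the service never names a concrete class.
    interface AccountRepository {
        int balanceOf(String accountId);
    }

    // A trivial in-memory implementation (hypothetical, for illustration only).
    static class InMemoryAccountRepository implements AccountRepository {
        private final Map<String, Integer> balances = new HashMap<>();

        InMemoryAccountRepository() {
            balances.put("alice", 100);
            balances.put("bob", 25);
        }

        @Override
        public int balanceOf(String accountId) {
            return balances.getOrDefault(accountId, 0);
        }
    }

    // The service declares its dependency in its constructor instead of looking it up.
    static class AccountService {
        private final AccountRepository repository;

        AccountService(AccountRepository repository) {
            this.repository = repository;
        }

        boolean canWithdraw(String accountId, int amount) {
            return repository.balanceOf(accountId) >= amount;
        }
    }

    // Composition root: the only place that knows which implementations are plugged together.
    static AccountService assemble() {
        AccountRepository repository = new InMemoryAccountRepository();
        return new AccountService(repository);
    }

    public static void main(String[] args) {
        AccountService service = assemble();
        System.out.println(service.canWithdraw("alice", 50)); // true
        System.out.println(service.canWithdraw("bob", 50));   // false
    }
}
```

AccountService depends only on the AccountRepository interface. A unit test can therefore hand it a stub, even a lambda such as id -> 10, without touching the service class.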

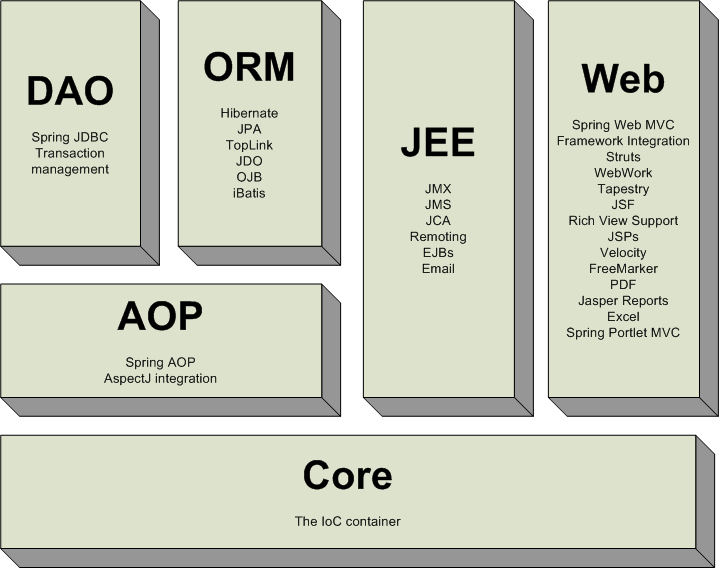

The Spring Framework contains a lot of features, which are well-organized in seven modules shown in the diagram below. This chapter discusses each of the modules in turn.

Overview of the Spring Framework

The Core package is the most fundamental part of the framework and provides the IoC and Dependency Injection features. The basic concept here is the BeanFactory, which provides a sophisticated implementation of the factory pattern which removes the need for programmatic singletons and allows you to decouple the configuration and specification of dependencies from your actual program logic.
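As a rough illustration of the factory idea, the sketch below is a deliberately tiny, hypothetical registry in plain Java. It is not Spring's BeanFactory API. Callers ask for an object by name. The factory decides whether to hand back one shared instance or a fresh one, so no class needs its own static getInstance() singleton, and the registration code stays separate from the code that uses the objects.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Supplier;

public class TinyBeanFactory {

    // How a registered definition should be instantiated (hypothetical names).
    enum Lifecycle { SHARED, NEW_EACH_TIME }

    private static final class Definition {
        final Supplier<?> creator;
        final Lifecycle lifecycle;

        Definition(Supplier<?> creator, Lifecycle lifecycle) {
            this.creator = creator;
            this.lifecycle = lifecycle;
        }
    }

    private final Map<String, Definition> definitions = new HashMap<>();
    private final Map<String, Object> sharedInstances = new HashMap<>();

    // Configuration step: record how to build an object, separately from the code that uses it.
    public void register(String name, Supplier<?> creator, Lifecycle lifecycle) {
        definitions.put(name, new Definition(creator, lifecycle));
    }

    // Lookup step: build (or reuse) the object and cast it to the requested type.
    public <T> T getBean(String name, Class<T> type) {
        Definition definition = definitions.get(name);
        if (definition == null) {
            throw new IllegalArgumentException("No definition registered for '" + name + "'");
        }
        Object instance;
        if (definition.lifecycle == Lifecycle.SHARED) {
            // Created lazily on the first request, then the cached instance is reused.
            instance = sharedInstances.computeIfAbsent(name, n -> definition.creator.get());
        } else {
            instance = definition.creator.get();
        }
        return type.cast(instance);
    }

    public static void main(String[] args) {
        TinyBeanFactory factory = new TinyBeanFactory();
        factory.register("sharedBuffer", StringBuilder::new, Lifecycle.SHARED);
        factory.register("scratchBuffer", StringBuilder::new, Lifecycle.NEW_EACH_TIME);

        StringBuilder a = factory.getBean("sharedBuffer", StringBuilder.class);
        StringBuilder b = factory.getBean("sharedBuffer", StringBuilder.class);
        System.out.println(a == b); // true: one shared instance
        System.out.println(factory.getBean("scratchBuffer", StringBuilder.class)
                == factory.getBean("scratchBuffer", StringBuilder.class)); // false: new each time
    }
}
```

The SHARED and NEW_EACH_TIME settings loosely correspond to the singleton and prototype scopes described in Section 3.4. A real container also resolves the dependencies between registered objects, which this sketch leaves out.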

The Context package builds on the solid base provided by the Core package: it provides a way to access objects in a framework-style manner that is somewhat reminiscent of a JNDI registry. The Context package inherits its features from the Beans package and adds support for internationalization (I18N) (using, for example, resource bundles), event propagation, resource loading, and the transparent creation of contexts by, for example, a servlet container.
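Resource-bundle-based message handling of this kind builds on the JDK's ResourceBundle and MessageFormat classes. The plain-JDK sketch below shows what resolving a message code with arguments for a given locale involves. The bundle class and message keys are hypothetical. The getMessage helper only loosely mirrors the shape of Spring's MessageSource.getMessage(code, args, locale) and does not reproduce it.

```java
import java.text.MessageFormat;
import java.util.ListResourceBundle;
import java.util.Locale;
import java.util.ResourceBundle;

public class MessageLookup {

    // A hypothetical in-code bundle; applications usually load messages from .properties files.
    public static class Messages extends ListResourceBundle {
        @Override
        protected Object[][] getContents() {
            return new Object[][] {
                {"greeting", "Hello, {0}!"},
                {"cart.items", "You have {0} item(s) in your cart."}
            };
        }
    }

    // Resolve the message code to a pattern, then substitute the positional arguments.
    static String getMessage(ResourceBundle bundle, String code, Object[] args, Locale locale) {
        MessageFormat format = new MessageFormat(bundle.getString(code), locale);
        return format.format(args);
    }

    public static void main(String[] args) {
        ResourceBundle bundle = new Messages();
        System.out.println(getMessage(bundle, "greeting", new Object[] {"Ana"}, Locale.ENGLISH));
        // prints: Hello, Ana!
    }
}
```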

The DAO package provides a JDBC-abstraction layer that removes the need to do tedious JDBC coding and parsing of database-vendor-specific error codes. Also, the DAO package provides a way to do programmatic as well as declarative transaction management, not only for classes implementing special interfaces, but for all your POJOs (plain old Java objects).
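Vendor error codes are tedious because plain JDBC reports almost every failure as a checked SQLException, and its meaning hides in a vendor code or a SQLState value. The sketch below is a simplified, hypothetical translator in plain Java. It is not Spring's own SQLExceptionTranslator, whose rules are considerably richer and can also use vendor-specific codes. It classifies an exception by its two-character SQLState class as defined by the SQL standard, and the unchecked exception types are invented for the example.

```java
import java.sql.SQLException;

public class SqlStateTranslator {

    // Hypothetical unchecked exception hierarchy, so callers are not forced to catch SQLException.
    static class DataAccessFailure extends RuntimeException {
        DataAccessFailure(String message, Throwable cause) { super(message, cause); }
    }

    static class IntegrityViolation extends DataAccessFailure {
        IntegrityViolation(String message, Throwable cause) { super(message, cause); }
    }

    static class BadSqlGrammar extends DataAccessFailure {
        BadSqlGrammar(String message, Throwable cause) { super(message, cause); }
    }

    static class ResourceFailure extends DataAccessFailure {
        ResourceFailure(String message, Throwable cause) { super(message, cause); }
    }

    // Classify by the SQLState "class", i.e. its first two characters.
    static DataAccessFailure translate(String task, SQLException ex) {
        String state = ex.getSQLState();
        String stateClass = (state != null && state.length() >= 2) ? state.substring(0, 2) : "";
        String message = task + ": " + ex.getMessage();
        switch (stateClass) {
            case "23": return new IntegrityViolation(message, ex); // integrity constraint violation
            case "42": return new BadSqlGrammar(message, ex);      // syntax error or access rule violation
            case "08": return new ResourceFailure(message, ex);    // connection exception
            default:   return new DataAccessFailure(message, ex);  // unknown: generic failure
        }
    }

    public static void main(String[] args) {
        SQLException duplicateKey = new SQLException("duplicate key", "23505");
        DataAccessFailure translated = translate("insert account", duplicateKey);
        System.out.println(translated.getClass().getSimpleName()); // IntegrityViolation
    }
}
```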

The ORM package provides integration layers for popular object-relational mapping APIs, including JPA, JDO, Hibernate, and iBatis. Using the ORM package you can use all those O/R-mappers in combination with all the other features Spring offers, such as the simple declarative transaction management feature mentioned previously.

Spring's AOP package provides an AOP Alliance-compliant aspect-oriented programming implementation allowing you to define, for example, method-interceptors and pointcuts to cleanly decouple code implementing functionality that should, logically speaking, be separated. Using source-level metadata functionality you can also incorporate all kinds of behavioral information into your code, in a manner similar to that of .NET attributes.
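For interface-based proxies, Spring AOP builds on the JDK's dynamic proxy mechanism. The sketch below uses only java.lang.reflect.Proxy, with no Spring or AOP Alliance types, and Greeter and withTracing are hypothetical names. It shows the essence of a method interceptor: every call on the proxy passes through one handler, which runs code before and after invoking the real target.

```java
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.InvocationTargetException;
import java.lang.reflect.Proxy;
import java.util.ArrayList;
import java.util.List;

public class TracingProxyDemo {

    // Hypothetical business interface; a JDK dynamic proxy can only implement interfaces.
    public interface Greeter {
        String greet(String name);
    }

    static class SimpleGreeter implements Greeter {
        @Override
        public String greet(String name) {
            return "Hello, " + name;
        }
    }

    // Wraps an interface-typed target and records each method call before and after it runs.
    static <T> T withTracing(T target, Class<T> iface, List<String> log) {
        InvocationHandler handler = (proxy, method, methodArgs) -> {
            log.add("before " + method.getName());
            try {
                return method.invoke(target, methodArgs); // proceed to the real target
            } catch (InvocationTargetException ex) {
                throw ex.getCause(); // rethrow the target's own exception, not the reflective wrapper
            } finally {
                log.add("after " + method.getName());
            }
        };
        Object proxyInstance = Proxy.newProxyInstance(iface.getClassLoader(), new Class<?>[] {iface}, handler);
        return iface.cast(proxyInstance);
    }

    public static void main(String[] args) {
        List<String> log = new ArrayList<>();
        Greeter greeter = withTracing(new SimpleGreeter(), Greeter.class, log);
        System.out.println(greeter.greet("Spring")); // Hello, Spring
        System.out.println(log);                     // [before greet, after greet]
    }
}
```

An AOP framework adds the missing pieces on top of this mechanism, chiefly pointcuts that decide which methods a given interceptor applies to.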

Spring's Web package provides basic web-oriented integration features, such as multipart file-upload functionality, the initialization of the IoC container using servlet listeners and a web-oriented application context. When using Spring together with WebWork or Struts, this is the package to integrate with.

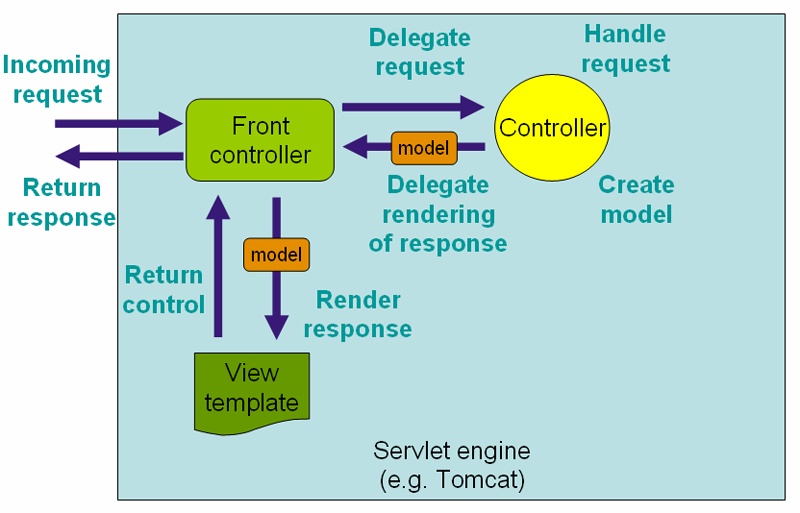

Spring's MVC package provides a Model-View-Controller (MVC) implementation for web applications. Spring's MVC framework is not just any old implementation; it provides a clean separation between domain model code and web forms, and allows you to use all the other features of the Spring Framework.

With the building blocks described above you can use Spring in all sorts of scenarios, from applets up to fully-fledged enterprise applications using Spring's transaction management functionality and web framework integration.

Typical full-fledged Spring web application

By using Spring's declarative transaction management features, the web application is fully transactional, just as it would be when using container-managed transactions as provided by Enterprise JavaBeans. All your custom business logic can be implemented using simple POJOs, managed by Spring's IoC container. Additional services include support for sending email, and validation that is independent of the web layer, enabling you to choose where to execute validation rules. Spring's ORM support is integrated with JPA, Hibernate, JDO and iBatis; for example, when using Hibernate, you can continue to use your existing mapping files and standard Hibernate SessionFactory configuration. Form controllers seamlessly integrate the web layer with the domain model, removing the need for ActionForms or other classes that transform HTTP parameters to values for your domain model.

Spring middle-tier using a third-party web framework

Sometimes the current circumstances do not allow you to completely switch to a different framework. The Spring Framework does not force you to use everything within it; it is not an all-or-nothing solution. Existing front-ends built using WebWork, Struts, Tapestry, or other UI frameworks can be integrated perfectly well with a Spring-based middle tier, allowing you to use the transaction features that Spring offers. The only thing you need to do is wire up your business logic using an ApplicationContext and integrate your web layer using a WebApplicationContext.

Remoting usage scenario

When you need to access existing code via web services, you can use Spring's Hessian-, Burlap-, Rmi- or JaxRpcProxyFactory classes. Enabling remote access to existing applications suddenly is not that hard anymore.

EJBs - Wrapping existing POJOs

The Spring Framework also provides an access- and abstraction- layer for Enterprise JavaBeans, enabling you to reuse your existing POJOs and wrap them in Stateless Session Beans, for use in scalable, failsafe web applications that might need declarative security.

If you have been using the Spring Framework for some time, you will be aware that Spring has just undergone a major revision.

This revision includes a host of new features, and a lot of the existing functionality has been reviewed and improved. In fact, so much of Spring is shiny and improved that the Spring development team decided that the next release of Spring merited an increment of the version number; and so Spring 2.0 was announced in December 2005 at the Spring Experience conference in Florida.

This chapter is a guide to the new and improved features of Spring 2.0. It is intended to provide a high-level summary so that seasoned Spring architects and developers can become immediately familiar with the new Spring 2.0 functionality. For more in-depth information on the features, please refer to the corresponding sections hyperlinked from within this chapter.

Some of the new and improved functionality described below has been (or will be) backported into the Spring 1.2.x release line. Please do consult the changelogs for the 1.2.x releases to see if a feature has been backported.

One of the areas that contains a considerable number of 2.0 improvements is Spring's IoC container.

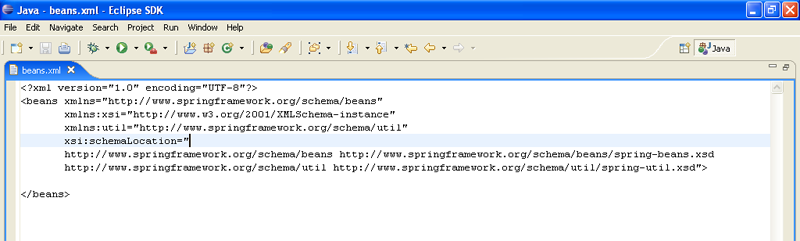

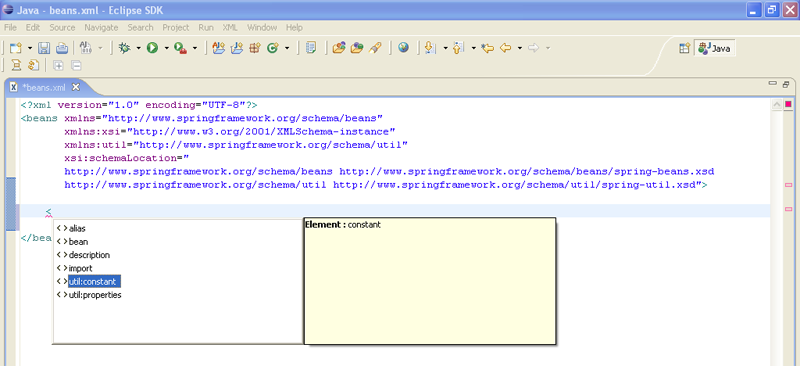

Spring XML configuration is now even easier, thanks to the advent of the new XML configuration syntax based on XML Schema. If you want to take advantage of the new tags that Spring provides (and the Spring team certainly suggests that you do, because they make configuration less verbose and easier to read), then do read the section entitled Appendix A, XML Schema-based configuration.

On a related note, there is a new, updated DTD for Spring 2.0 that you may wish to reference if you cannot take advantage of the XML Schema-based configuration. The DOCTYPE declaration is included below for your convenience, but the interested reader should definitely read the 'spring-beans-2.0.dtd' DTD included in the 'dist/resources' directory of the Spring 2.0 distribution.

<!DOCTYPE beans PUBLIC "-//SPRING//DTD BEAN 2.0//EN" "http://www.springframework.org/dtd/spring-beans-2.0.dtd">

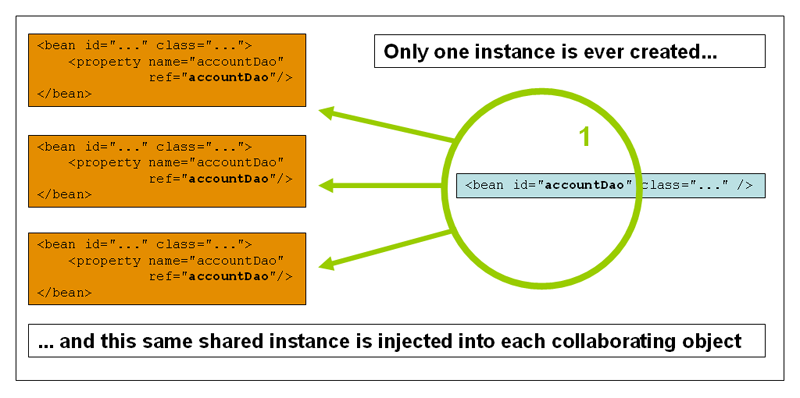

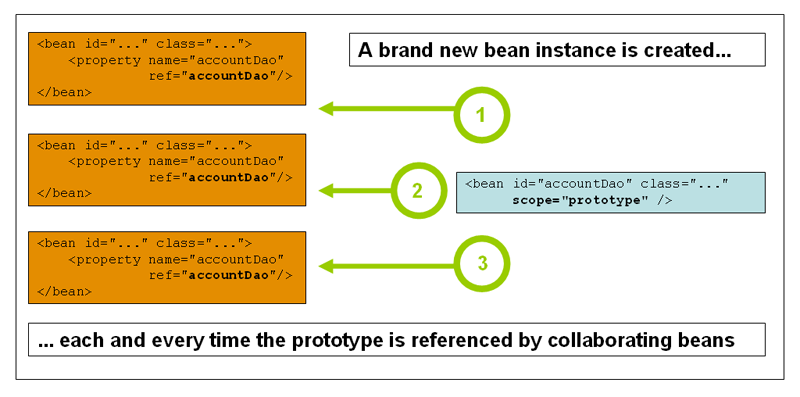

Previous versions of Spring had IoC container level support for exactly two distinct bean scopes (singleton and prototype). Spring 2.0 improves on this by not only providing a number of additional scopes depending on the environment in which Spring is being deployed (for example, request and session scoped beans in a web environment), but also by providing integration points so that Spring users can create their own scopes.

It should be noted that although the underlying (and internal) implementation for singleton- and prototype-scoped beans has been changed, this change is totally transparent to the end user... no existing configuration needs to change, and no existing configuration will break.

Both the new and the original scopes are detailed in the section entitled Section 3.4, “Bean scopes”.
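Spring 2.0's integration point for custom scopes is its Scope interface. Its central get method receives a bean name and an object factory and returns the instance that is current for that scope. The plain-Java sketch below mirrors only that idea, using a hypothetical SimpleScope interface rather than Spring's own type. It implements a thread-bound scope: repeated lookups on one thread return the same object, while another thread gets its own.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Supplier;

public class ThreadScopeSketch {

    // Hypothetical stand-in for a pluggable scope: "give me the current instance for this name".
    interface SimpleScope {
        Object get(String name, Supplier<?> objectFactory);
    }

    // One map of instances per thread, so each thread sees its own objects.
    static class ThreadScope implements SimpleScope {
        private final ThreadLocal<Map<String, Object>> instances =
                ThreadLocal.withInitial(HashMap::new);

        @Override
        public Object get(String name, Supplier<?> objectFactory) {
            // Create on first access in this thread, then reuse.
            return instances.get().computeIfAbsent(name, n -> objectFactory.get());
        }
    }

    public static void main(String[] args) throws InterruptedException {
        ThreadScope scope = new ThreadScope();
        Object first = scope.get("cart", Object::new);
        Object again = scope.get("cart", Object::new);

        Object[] fromOtherThread = new Object[1];
        Thread worker = new Thread(() -> fromOtherThread[0] = scope.get("cart", Object::new));
        worker.start();
        worker.join();

        System.out.println(first == again);              // true: same thread, same instance
        System.out.println(first == fromOtherThread[0]); // false: different thread, new instance
    }
}
```

In Spring itself, a custom scope implementation is registered with the container under a name, and bean definitions then refer to it through the scope attribute.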

Not only is XML configuration easier to write, it is now also extensible.

What 'extensible' means in this context is that you, as an application developer, or (more likely) as a third-party framework or product vendor, can write custom tags that other developers can then plug into their own Spring configuration files. This allows your own domain-specific language (the term is used loosely here), of sorts, to be reflected in the configuration of your own components.

Implementing custom Spring tags may not be of interest to every single application developer or enterprise architect using Spring in their own projects. We expect third-party vendors to be highly interested in developing custom configuration tags for use in Spring configuration files.

The extensible configuration mechanism is documented in Appendix B, Extensible XML authoring.

Spring 2.0 has a much improved AOP offering. The Spring AOP framework itself is markedly easier to configure in XML, and significantly less verbose as a result; and Spring 2.0 integrates with the AspectJ pointcut language and @AspectJ aspect declaration style. The chapter entitled Chapter 6, Aspect Oriented Programming with Spring is dedicated to describing this new support.

Spring 2.0 introduces new schema support for defining aspects backed by regular Java objects. This support takes advantage of the AspectJ pointcut language and offers fully typed advice (i.e. no more casting and Object[] argument manipulation). Details of this support can be found in the section entitled Section 6.3, “Schema-based AOP support”.

Spring 2.0 also supports aspects defined using the @AspectJ annotations. These aspects can be shared between AspectJ and Spring AOP, and require (honestly!) only some simple configuration. Said support for @AspectJ aspects is discussed in Section 6.2, “@AspectJ support”.

The way that transactions are configured in Spring 2.0 has been changed significantly. The previous 1.2.x style of configuration continues to be valid (and supported), but the new style is markedly less verbose and is the recommended style. Spring 2.0 also ships with an AspectJ aspects library that you can use to make pretty much any object transactional - even objects not created by the Spring IoC container.

The chapter entitled Chapter 9, Transaction management contains all of the details.

Spring 2.0 ships with a JPA abstraction layer that is similar in intent to Spring's JDBC abstraction layer in terms of scope and general usage patterns.

If you are interested in using a JPA-implementation as the backbone of your persistence layer, the section entitled Section 12.6, “JPA” is dedicated to detailing Spring's support and value-add in this area.

Prior to Spring 2.0, Spring's JMS offering was limited to sending messages and the synchronous receiving of messages. This functionality (encapsulated in the JmsTemplate class) is great, but it doesn't address the requirement for the asynchronous receiving of messages.

Spring 2.0 now ships with full support for the reception of messages in an asynchronous fashion, as detailed in the section entitled Section 19.4.2, “Asynchronous Reception - Message-Driven POJOs”.

There are some small (but nevertheless notable) new classes in the Spring Framework's JDBC support library. The first, NamedParameterJdbcTemplate, provides support for programming JDBC statements using named parameters (as opposed to programming JDBC statements using only classic placeholder ('?') arguments).
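A named-parameter statement still has to reach JDBC as a classic '?' statement. The sketch below shows that translation step in plain Java: it rewrites :name placeholders to ? and records the order in which values must be bound. It is a simplified, hypothetical parser. It does not handle quoted literals, comments or '::' casts, and it is not the implementation inside NamedParameterJdbcTemplate.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

public class NamedParameterSketch {

    // Result of parsing: JDBC-ready SQL plus the parameter names in binding order.
    static final class ParsedSql {
        final String sql;
        final List<String> parameterNames;

        ParsedSql(String sql, List<String> parameterNames) {
            this.sql = sql;
            this.parameterNames = parameterNames;
        }
    }

    // Replace each ":identifier" with "?" (simplified: no handling of quotes, comments or "::").
    static ParsedSql parse(String namedSql) {
        StringBuilder jdbcSql = new StringBuilder();
        List<String> names = new ArrayList<>();
        int i = 0;
        while (i < namedSql.length()) {
            char c = namedSql.charAt(i);
            if (c == ':' && i + 1 < namedSql.length()
                    && Character.isJavaIdentifierStart(namedSql.charAt(i + 1))) {
                int end = i + 1;
                while (end < namedSql.length() && Character.isJavaIdentifierPart(namedSql.charAt(end))) {
                    end++;
                }
                names.add(namedSql.substring(i + 1, end));
                jdbcSql.append('?');
                i = end;
            } else {
                jdbcSql.append(c);
                i++;
            }
        }
        return new ParsedSql(jdbcSql.toString(), names);
    }

    // Build the positional argument array that a PreparedStatement would be bound with.
    static Object[] bindValues(ParsedSql parsed, Map<String, ?> values) {
        Object[] args = new Object[parsed.parameterNames.size()];
        for (int p = 0; p < args.length; p++) {
            String name = parsed.parameterNames.get(p);
            if (!values.containsKey(name)) {
                throw new IllegalArgumentException("No value supplied for parameter '" + name + "'");
            }
            args[p] = values.get(name);
        }
        return args;
    }

    public static void main(String[] args) {
        ParsedSql parsed = parse("select count(*) from T_ACTOR where first_name = :first and last_name = :last");
        System.out.println(parsed.sql);
        // select count(*) from T_ACTOR where first_name = ? and last_name = ?
        System.out.println(parsed.parameterNames); // [first, last]
    }
}
```

A name that appears twice yields two '?' markers and is bound twice, which is why the parser keeps an ordered list rather than a set.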

Another of the new classes, the SimpleJdbcTemplate, is aimed at making the JdbcTemplate even easier to use when you are developing against Java 5+ (Tiger).

The web tier support has been substantially improved and expanded in Spring 2.0.

A rich JSP tag library for Spring MVC was the JIRA issue that garnered the most votes from Spring users (by a wide margin).

Spring 2.0 ships with a full-featured JSP tag library that makes the job of authoring JSP pages much easier when using Spring MVC; the Spring team is confident it will satisfy all of those developers who voted for the issue on JIRA. The new tag library is itself covered in the section entitled Section 13.9, “Using Spring's form tag library”, and a quick reference to all of the new tags can be found in the appendix entitled Appendix E, spring-form.tld.

For a lot of projects, sticking to established conventions and having reasonable defaults is just what the projects need... this theme of convention-over-configuration now has explicit support in Spring MVC. What this means is that if you establish a set of naming conventions for your Controllers and views, you can substantially cut down on the amount of XML configuration that is required to set up handler mappings, view resolvers, ModelAndView instances, etc. This is a great boon with regards to rapid prototyping, and can also lend a degree of (always good-to-have) consistency across a codebase.

Spring MVC's convention-over-configuration support is detailed in the section entitled Section 13.11, “Convention over configuration”.
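To give a flavour of such conventions, the sketch below implements two simplified naming rules in plain Java. The first derives a URL pattern from a controller class name (WelcomeController to /welcome*). The second derives a view name from a request path (/admin/index.html to admin/index). These follow examples given for Spring MVC's convention-based support, but the methods are hypothetical. They ignore details such as the context path, multi-action controllers and the exact treatment of camel-cased names.

```java
import java.util.Locale;

public class NamingConventions {

    private static final String CONTROLLER_SUFFIX = "Controller";

    // "WelcomeController" -> "/welcome*" (suffix stripped, lower-cased, wildcard appended).
    static String urlPatternFor(String simpleClassName) {
        String base = simpleClassName;
        if (base.endsWith(CONTROLLER_SUFFIX)) {
            base = base.substring(0, base.length() - CONTROLLER_SUFFIX.length());
        }
        return "/" + base.toLowerCase(Locale.ROOT) + "*";
    }

    // "/admin/index.html" -> "admin/index" (leading slash and file extension removed).
    static String viewNameFor(String requestPath) {
        String path = requestPath.startsWith("/") ? requestPath.substring(1) : requestPath;
        int lastSlash = path.lastIndexOf('/');
        int dot = path.lastIndexOf('.');
        if (dot > lastSlash) {
            path = path.substring(0, dot); // only strip an extension in the last path segment
        }
        return path;
    }

    public static void main(String[] args) {
        System.out.println(urlPatternFor("WelcomeController")); // /welcome*
        System.out.println(viewNameFor("/display.html"));       // display
        System.out.println(viewNameFor("/admin/index.html"));   // admin/index
    }
}
```

The point of the design is that both rules are pure functions of names you already have, so no per-controller mapping or per-view resolver entry needs to be written.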

Spring 2.0 ships with a Portlet framework that is conceptually similar to the Spring MVC framework. Detailed coverage of the Spring Portlet framework can be found in the section entitled Chapter 16, Portlet MVC Framework.

This final section outlines all of the other new and improved Spring 2.0 features and functionality.

Spring 2.0 now has support for beans written in languages other than Java, with the currently supported dynamic languages being JRuby, Groovy and BeanShell. This dynamic language support is comprehensively detailed in the section entitled Chapter 24, Dynamic language support.

The Spring Framework now has support for JMX Notifications; it is also possible to exercise declarative control over the registration behavior of MBeans with an MBeanServer.
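For readers new to JMX notifications, the plain-JDK sketch below shows the moving parts involved. A standard MBean emits a Notification, it is registered with an MBeanServer under an ObjectName, and a listener is subscribed through the server. Only javax.management classes are used. The Counter MBean, its ObjectName and the notification type are hypothetical, and none of Spring's own JMX API is shown.

```java
import java.util.ArrayList;
import java.util.List;
import javax.management.JMException;
import javax.management.MBeanServer;
import javax.management.MBeanServerFactory;
import javax.management.Notification;
import javax.management.NotificationBroadcasterSupport;
import javax.management.ObjectName;

public class JmxNotificationSketch {

    // Standard MBean convention: the management interface is named after the class plus "MBean".
    public interface CounterMBean {
        int getCount();

        void increment();
    }

    // The managed resource; NotificationBroadcasterSupport supplies the listener bookkeeping.
    public static class Counter extends NotificationBroadcasterSupport implements CounterMBean {
        private int count;
        private long sequence;

        @Override
        public synchronized int getCount() {
            return count;
        }

        @Override
        public void increment() {
            Notification notification;
            synchronized (this) {
                count++;
                sequence++;
                notification = new Notification("example.counter.incremented", this, sequence, "count=" + count);
            }
            // With the no-arg constructor, listeners are invoked in the sending thread.
            sendNotification(notification);
        }
    }

    // Registers the MBean, subscribes a listener through the server, and triggers one notification.
    static List<String> run() {
        try {
            MBeanServer server = MBeanServerFactory.newMBeanServer();
            ObjectName name = new ObjectName("example:type=Counter");
            server.registerMBean(new Counter(), name);

            List<String> received = new ArrayList<>();
            server.addNotificationListener(name,
                    (notification, handback) -> received.add(notification.getMessage()), null, null);

            server.invoke(name, "increment", new Object[0], new String[0]);
            return received;
        } catch (JMException ex) {
            throw new IllegalStateException(ex);
        }
    }

    public static void main(String[] args) {
        System.out.println(run()); // [count=1]
    }
}
```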

Spring 2.0 offers an abstraction around the scheduling of tasks. For the interested developer, the section entitled Section 23.4, “The Spring TaskExecutor abstraction” contains all of the details.
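The value of such an abstraction is that application code submits work through one small interface and stays unaware of whether tasks run synchronously, on new threads, or on a pool. The plain-Java sketch below illustrates that idea with a hypothetical single-method interface shaped like Spring's TaskExecutor, which exposes execute(Runnable). The two implementations are illustrative and are not Spring classes.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

public class TaskExecutorSketch {

    // Hypothetical single-method abstraction; callers depend only on this.
    interface SimpleTaskExecutor {
        void execute(Runnable task);
    }

    // Runs each task immediately in the caller's thread (handy in tests).
    static class InlineExecutor implements SimpleTaskExecutor {
        @Override
        public void execute(Runnable task) {
            task.run();
        }
    }

    // Delegates to a fixed-size JDK thread pool.
    static class PooledExecutor implements SimpleTaskExecutor {
        private final ExecutorService pool;

        PooledExecutor(int threads) {
            this.pool = Executors.newFixedThreadPool(threads);
        }

        @Override
        public void execute(Runnable task) {
            pool.execute(task);
        }

        // Stop accepting tasks and wait for the submitted ones to finish.
        boolean shutdownAndWait(long seconds) throws InterruptedException {
            pool.shutdown();
            return pool.awaitTermination(seconds, TimeUnit.SECONDS);
        }
    }

    // Application code: schedules work without knowing how it will be run.
    static void sendReminders(SimpleTaskExecutor executor, int count, AtomicInteger sent) {
        for (int i = 0; i < count; i++) {
            executor.execute(sent::incrementAndGet);
        }
    }

    public static void main(String[] args) throws InterruptedException {
        AtomicInteger sent = new AtomicInteger();
        sendReminders(new InlineExecutor(), 3, sent);
        System.out.println(sent.get()); // 3

        PooledExecutor pooled = new PooledExecutor(2);
        sendReminders(pooled, 5, sent);
        pooled.shutdownAndWait(5);
        System.out.println(sent.get()); // 8
    }
}
```

Swapping InlineExecutor for PooledExecutor changes how the reminders run without any change to sendReminders, which is the decoupling the abstraction is meant to provide.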

Find below pointers to documentation describing some of the new Java 5 support in Spring 2.0.

This final section details issues that may arise during any migration from Spring 1.2.x to Spring 2.0. Feel free to take this next statement with a pinch of salt, but upgrading to Spring 2.0 from a Spring 1.2 application should simply be a matter of dropping the Spring 2.0 jar into the appropriate location in your application's directory structure.

The keyword from the last sentence was of course the “should”. Whether the upgrade is seamless or not depends on how much of the Spring APIs you are using in your code. Spring 2.0 removed pretty much all of the classes and methods previously marked as deprecated in the Spring 1.2.x codebase, so if you have been using such classes and methods, you will of course have to use alternative classes and methods (some of which are summarized below).

With regards to configuration, Spring 1.2.x style XML configuration is 100%, satisfaction-guaranteed compatible with the Spring 2.0 library. Of course if you are still using the Spring 1.2.x DTD, then you won't be able to take advantage of some of the new Spring 2.0 functionality (such as scopes and easier AOP and transaction configuration), but nothing will blow up.

The suggested migration strategy is to drop in the Spring 2.0 jar(s) to benefit from the improved code present in the release (bug fixes, optimizations, etc.). You can then, on an incremental basis, choose to start using the new Spring 2.0 features and configuration. For example, you could choose to start configuring just your aspects in the new Spring 2.0 style; it is perfectly valid to have 90% of your configuration using the old-school Spring 1.2.x configuration (which references the 1.2.x DTD), and have the other 10% using the new Spring 2.0 configuration (which references the 2.0 DTD or XSD). Bear in mind that you are not forced to upgrade your XML configuration should you choose to drop in the Spring 2.0 libraries.

For a comprehensive list of changes, consult the 'changelog.txt' file that is located in the top level directory of the Spring Framework 2.0 distribution.

The packaging of the Spring Framework jars has changed quite substantially between the 1.2.x and 2.0 releases. In particular, there are now dedicated jars for the JDO, Hibernate 2/3, and TopLink ORM integration classes: they are no longer bundled in the core 'spring.jar' file.

Spring 2.0 ships with XSDs that describe Spring's XML metadata format in a much richer fashion than the DTD that shipped with previous versions. The old DTD is still fully supported, but if possible you are encouraged to reference the XSD files at the top of your bean definition files.

One thing that has changed in a (somewhat) breaking fashion is the way that bean scopes are defined. If you are using the Spring 1.2 DTD you can continue to use the 'singleton' attribute. You can, however, choose to reference the new Spring 2.0 DTD, which does not permit the use of the 'singleton' attribute, but rather uses the 'scope' attribute to define the bean lifecycle scope.

A number of classes and methods that previously were marked as @deprecated have been removed from the Spring 2.0 codebase. The Spring team decided that the 2.0 release marked a fresh start of sorts, and that any deprecated 'cruft' was better excised now instead of continuing to haunt the codebase for the foreseeable future.

As mentioned previously, for a comprehensive list of changes, consult the 'changelog.txt' file that is located in the top level directory of the Spring Framework 2.0 distribution.

The following classes/interfaces have been removed from the Spring 2.0 codebase:

- ResultReader: Use the RowMapper interface instead.

- BeanFactoryBootstrap: Consider using a BeanFactoryLocator or a custom bootstrap class instead.

Please note that support for Apache OJB was totally removed from the main Spring source tree; the Apache OJB integration library is still available, but can be found in its new home in the Spring Modules project.

Please note that support for iBATIS SQL Maps 1.3 has been removed. If you haven't done so already, upgrade to iBATIS SQL Maps 2.0/2.1.

The view name that is determined by the UrlFilenameViewController now takes into account the nested path of the request. This is a breaking change from the original contract of the UrlFilenameViewController, and means that if you are upgrading to Spring 2.0 from Spring 1.x and you are using this class you might have to change your Spring Web MVC configuration slightly. Refer to the class-level Javadocs of the UrlFilenameViewController to see examples of the new contract for view name determination.

A number of the sample applications have also been updated to showcase the new and improved features of Spring 2.0, so do take the time to investigate them. The aforementioned sample applications can be found in the 'samples' directory of the full Spring distribution ('spring-with-dependencies.[zip|tar.gz]'), and are documented (in part) in the chapter entitled Chapter 26, Showcase applications.

The Spring reference documentation has also substantially been updated to reflect all of the above features new in Spring 2.0. While every effort has been made to ensure that there are no errors in this documentation, some errors may nevertheless have crept in. If you do spot any typos or even more serious errors, and you can spare a few cycles during lunch, please do bring the error to the attention of the Spring team by raising an issue.

Special thanks to Arthur Loder for his tireless proofreading of the Spring Framework reference documentation and Javadocs.

This initial part of the reference documentation covers all of those technologies that are absolutely integral to the Spring Framework.

Foremost amongst these is the Spring Framework's Inversion of Control (IoC) container. A thorough treatment of the Spring Framework's IoC container is closely followed by comprehensive coverage of Spring's Aspect-Oriented Programming (AOP) technologies. The Spring Framework has its own AOP framework, which is conceptually easy to understand, and which successfully addresses the 80% sweet spot of AOP requirements in Java enterprise programming.

Coverage of Spring's integration with AspectJ (currently the richest - in terms of features - and certainly most mature AOP implementation in the Java enterprise space) is also provided.

Finally, the adoption of the test-driven development (TDD) approach to software development is certainly advocated by the Spring team, and so Spring's support for integration testing is covered (alongside best practices for unit testing). The Spring team have found that the correct use of IoC certainly does make both unit and integration testing easier (in that the presence of setter methods and appropriate constructors on classes makes them easier to wire together in a test without having to set up service locator registries and suchlike)... the chapter dedicated solely to testing will hopefully convince you of this as well.

This chapter covers the Spring Framework's implementation of the Inversion of Control (IoC) [1] principle.

The org.springframework.beans and org.springframework.context packages provide the basis for the Spring Framework's IoC container. The BeanFactory interface provides an advanced configuration mechanism capable of managing objects of any nature. The ApplicationContext interface builds on top of the BeanFactory (it is a sub-interface) and adds other functionality such as easier integration with Spring's AOP features, message resource handling (for use in internationalization), event propagation, and application-layer specific contexts such as the WebApplicationContext for use in web applications.

In short, the BeanFactory provides the configuration framework and basic functionality, while the ApplicationContext adds more enterprise-centric functionality to it. The ApplicationContext is a complete superset of the BeanFactory, and any description of BeanFactory capabilities and behavior is to be considered to apply to the ApplicationContext as well.

This chapter is divided into two parts, with the first part covering the basic principles that apply to both the BeanFactory and ApplicationContext, and with the second part covering those features that apply only to the ApplicationContext interface.

In Spring, those objects that form the backbone of your application and that are managed by the Spring IoC container are referred to as beans. A bean is simply an object that is instantiated, assembled and otherwise managed by a Spring IoC container; other than that, there is nothing special about a bean (it is in all other respects one of probably many objects in your application). These beans, and the dependencies between them, are reflected in the configuration metadata used by a container.

The org.springframework.beans.factory.BeanFactory is the actual representation of the Spring IoC container that is responsible for containing and otherwise managing the aforementioned beans.

The BeanFactory interface is the central IoC container interface in Spring. Its responsibilities include instantiating or sourcing application objects, configuring such objects, and assembling the dependencies between these objects.

There are a number of implementations of the BeanFactory interface that come supplied straight out-of-the-box with Spring. The most commonly used BeanFactory implementation is the XmlBeanFactory class. This implementation allows you to express the objects that compose your application, and the doubtless rich interdependencies between such objects, in terms of XML. The XmlBeanFactory takes this XML configuration metadata and uses it to create a fully configured system or application.

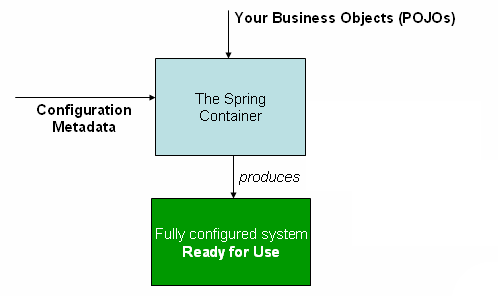

The Spring IoC container

As can be seen in the above image, the Spring IoC container consumes some form of configuration metadata; this configuration metadata is nothing more than how you (as an application developer) tell the Spring container how to “instantiate, configure, and assemble [the objects in your application]”. This configuration metadata is typically supplied in a simple and intuitive XML format. When using XML-based configuration metadata, you write bean definitions for those beans that you want the Spring IoC container to manage, and then let the container do its stuff.

Note: XML-based metadata is by far the most commonly used form of configuration metadata. It is not, however, the only form of configuration metadata that is allowed. The Spring IoC container itself is totally decoupled from the format in which this configuration metadata is actually written. At the time of writing, you can supply this configuration metadata using either XML, the Java properties format, or programmatically (using Spring's public API). The XML-based configuration metadata format really is simple though, and so the remainder of this chapter will use the XML format to convey key concepts and features of the Spring IoC container.

Please be advised that in the vast majority of application scenarios, explicit user code is not required to instantiate one or more instances of a Spring IoC container. For example, in a web application scenario, eight or so lines of boilerplate J2EE web descriptor XML in the web.xml file of the application will typically suffice (see Section 3.8.4, “Convenient ApplicationContext instantiation for web applications”).

Spring configuration consists of at least one bean definition that the container must manage, but typically there will be more than one bean definition. When using XML-based configuration metadata, these beans are configured as <bean/> elements inside a top-level <beans/> element.

These bean definitions correspond to the actual objects that make up your application. Typically you will have bean definitions for your service layer objects, your data access objects (DAOs), presentation objects such as Struts Action instances, infrastructure objects such as Hibernate SessionFactory instances, JMS Queue references, etc. (the possibilities are of course endless, and are limited only by the scope and complexity of your application). (Typically one does not configure fine-grained domain objects in the container.)

Find below an example of the basic structure of XML-based configuration metadata.

<?xml version="1.0" encoding="UTF-8"?>

<beans xmlns="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://www.springframework.org/schema/beans http://www.springframework.org/schema/beans/spring-beans-2.0.xsd">

<bean id="..." class="...">

<!-- collaborators and configuration for this bean go here -->

</bean>

<bean id="..." class="...">

<!-- collaborators and configuration for this bean go here -->

</bean>

<!-- more bean definitions go here... -->

</beans>

Instantiating a Spring IoC container is easy; find below some examples of how to do just that:

Resource resource = new FileSystemResource("beans.xml");

BeanFactory factory = new XmlBeanFactory(resource);

... or...

ClassPathResource resource = new ClassPathResource("beans.xml");

BeanFactory factory = new XmlBeanFactory(resource);

... or...

ApplicationContext context = new ClassPathXmlApplicationContext(

new String[] {"applicationContext.xml", "applicationContext-part2.xml"});

// of course, an ApplicationContext is just a BeanFactory

BeanFactory factory = context;

It can often be useful to split up container definitions into multiple XML files. One way to then load an application context which is configured from all these XML fragments is to use the application context constructor which takes multiple Resource locations. With a bean factory, a bean definition reader can be used multiple times to read definitions from each file in turn.

Generally, the Spring team prefers the above approach, since it keeps container configuration files unaware of the fact that they are being combined with others. An alternate approach is to use one or more occurrences of the <import/> element to load bean definitions from another file (or files). Any <import/> elements must be placed before <bean/> elements in the file doing the importing.

Let's look at a sample:

<beans>

<import resource="services.xml"/>

<import resource="resources/messageSource.xml"/>

<import resource="/resources/themeSource.xml"/>

<bean id="bean1" class="..."/>

<bean id="bean2" class="..."/>

</beans>

In this example, external bean definitions are being loaded from three files: services.xml, messageSource.xml, and themeSource.xml. All location paths are considered relative to the definition file doing the importing, so services.xml in this case must be in the same directory or classpath location as the file doing the importing, while messageSource.xml and themeSource.xml must be in a resources location below the location of the importing file. As you can see, a leading slash is actually ignored, but given that these are considered relative paths, it is probably better form not to use the slash at all.

The contents of the files being imported must be fully valid XML bean definition files according to the Schema or DTD, including the top level <beans/> element.
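The relative-path rules above can be illustrated with java.nio.file. This is a minimal sketch: ImportPaths is a hypothetical helper, not a Spring class, and it assumes plain file-system locations, whereas Spring itself works with its Resource abstraction rather than java.nio paths.

```java
import java.nio.file.Path;
import java.nio.file.Paths;

// Hypothetical helper, not a Spring class: applies the documented
// <import/> rules to plain file-system paths.
final class ImportPaths {

    private ImportPaths() {
    }

    // Resolves an <import resource="..."/> location against the importing file.
    static Path resolve(Path importingFile, String location) {
        // A leading slash is ignored; the location is still treated as relative.
        String relative = location.startsWith("/") ? location.substring(1) : location;
        Path baseDir = importingFile.getParent();
        Path resolved = (baseDir == null) ? Paths.get(relative) : baseDir.resolve(relative);
        return resolved.normalize();
    }
}
```

With an importing file at config/beans.xml (an assumed location), "services.xml" resolves to config/services.xml, and both "resources/messageSource.xml" and "/resources/themeSource.xml" end up under config/resources/.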

As mentioned previously, a Spring IoC container manages one or more beans. These beans are created using the instructions defined in the configuration metadata that has been supplied to the container (typically in the form of XML <bean/> definitions).

Within the container itself, these bean definitions are represented as BeanDefinition objects, which contain (among other information) the following metadata:

- a package-qualified class name: this is normally the actual implementation class of the bean being defined. However, if the bean is to be instantiated by invoking a static factory method instead of using a normal constructor, this will actually be the class name of the factory class.

- bean behavioral configuration elements, which state how the bean should behave in the container (prototype or singleton, autowiring mode, initialization and destruction callbacks, and so forth).

- constructor arguments and property values to set in the newly created bean. An example would be the number of connections to use in a bean that manages a connection pool (either specified as a property or as a constructor argument), or the pool size limit.

- other beans which are needed for the bean to do its work, that is, collaborators (also called dependencies).

The concepts listed above directly translate to a set of properties that each bean definition consists of. Some of these properties are listed below, along with a link to further documentation about each of them.

Table 3.1. The bean definition

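| Feature | Explained in... |
|---|---|
| class | Section 3.2, “Basics - containers and beans” |
| name | Section 3.2, “Basics - containers and beans” |
| scope | Section 3.4, “Bean scopes” |
| constructor arguments | Section 3.3, “Dependencies” |
| properties | Section 3.3, “Dependencies” |
| autowiring mode | Section 3.3, “Dependencies” |
| dependency checking mode | Section 3.3, “Dependencies” |
| lazy-initialization mode | Section 3.3, “Dependencies” |
| initialization method | Section 3.5, “Customizing the nature of a bean” |
| destruction method | Section 3.5, “Customizing the nature of a bean” |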

Besides bean definitions which contain information on how to create a specific bean, certain BeanFactory implementations also permit the registration of existing objects that have been created outside the factory (by user code). The DefaultListableBeanFactory class supports this through the registerSingleton(..) method. Typical applications, however, work solely with beans defined through metadata bean definitions.

Every bean has one or more ids (also called identifiers, or names; these terms refer to the same thing). These ids must be unique within the container the bean is hosted in. A bean will almost always have only one id, but if a bean has more than one id, the extra ones can essentially be considered aliases.

When using XML-based configuration metadata, you use the 'id' or 'name' attributes to specify the bean identifier(s). The 'id' attribute allows you to specify exactly one id, and as it is a real XML element ID attribute, the XML parser is able to do some extra validation when other elements reference the id; as such, it is the preferred way to specify a bean id. However, the XML specification does limit the characters which are legal in XML IDs. This is usually not a constraint, but if you need to use one of these special XML characters, or want to introduce other aliases to the bean, you may also or instead specify one or more bean ids, separated by a comma (,), semicolon (;), or whitespace in the 'name' attribute.

Please note that you are not required to supply a name for a bean. If no name is supplied explicitly, the container will generate a (unique) name for that bean. The motivations for not supplying a name for a bean will be discussed later (one use case is inner beans).

In a bean definition itself, you may supply more than one name for the bean, by using a combination of up to one name specified via the id attribute, and any number of other names via the name attribute. All these names can be considered equivalent aliases to the same bean, and are useful for some situations, such as allowing each component used in an application to refer to a common dependency using a bean name that is specific to that component itself.
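A minimal sketch of how one definition's names could be gathered from the id and name attributes, assuming exactly the separator rules described above. BeanNames.collect is a hypothetical helper written for illustration; it is not part of Spring.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: collects every name declared for one bean definition.
final class BeanNames {

    private BeanNames() {
    }

    // id may be null; nameAttribute may be null or hold several names.
    static List<String> collect(String id, String nameAttribute) {
        List<String> names = new ArrayList<>();
        if (id != null && !id.isEmpty()) {
            names.add(id);
        }
        if (nameAttribute != null) {
            // Separators: comma, semicolon, or any run of whitespace.
            for (String name : nameAttribute.trim().split("[,;\\s]+")) {
                if (!name.isEmpty() && !names.contains(name)) {
                    names.add(name);
                }
            }
        }
        return names;
    }
}
```

For example, id="dataSource" together with name="ds, mainDs;primary" yields the four equivalent names dataSource, ds, mainDs and primary.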

Having to specify all aliases when the bean is actually defined is not always adequate, however. It is sometimes desirable to introduce an alias for a bean which is defined elsewhere. In XML-based configuration metadata this may be accomplished via the use of the standalone <alias/> element. For example:

<alias name="fromName" alias="toName"/>

In this case, a bean in the same container which is named 'fromName' may also, after the use of this alias definition, be referred to as 'toName'.

As a concrete example, consider the case where component A defines a DataSource bean called componentA-dataSource, in its XML fragment. Component B would however like to refer to the DataSource as componentB-dataSource in its XML fragment. And the main application, MyApp, defines its own XML fragment and assembles the final application context from all three fragments, and would like to refer to the DataSource as myApp-dataSource. This scenario can be easily handled by adding to the MyApp XML fragment the following standalone aliases:

<alias name="componentA-dataSource" alias="componentB-dataSource"/>

<alias name="componentA-dataSource" alias="myApp-dataSource" />

Now each component and the main app can refer to the dataSource via a name that is unique and guaranteed not to clash with any other definition (effectively there is a namespace), yet they refer to the same bean.
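The aliasing scenario above can be modeled with two maps: one from bean names to beans, and one from aliases to the names they stand for. ToyAliasRegistry is a hypothetical illustration, not Spring's implementation, and it does not detect alias cycles.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical toy registry (not Spring's API): several names, one bean.
final class ToyAliasRegistry {

    private final Map<String, Object> beans = new HashMap<>();
    private final Map<String, String> aliases = new HashMap<>();

    void registerBean(String name, Object bean) {
        beans.put(name, bean);
    }

    // Same idea as <alias name="name" alias="alias"/>.
    void registerAlias(String name, String alias) {
        aliases.put(alias, name);
    }

    // Follows alias links until a real bean name is reached (no cycle check).
    String canonicalName(String name) {
        String current = name;
        while (aliases.containsKey(current)) {
            current = aliases.get(current);
        }
        return current;
    }

    Object getBean(String name) {
        Object bean = beans.get(canonicalName(name));
        if (bean == null) {
            throw new IllegalArgumentException("No bean named '" + name + "'");
        }
        return bean;
    }
}
```

Registering the two aliases from the MyApp fragment and then asking for componentB-dataSource or myApp-dataSource returns the very same object as componentA-dataSource.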

A bean definition can be seen as a recipe for creating one or more actual objects. The container looks at the recipe for a named bean when asked, and uses the configuration metadata encapsulated by that bean definition to create (or acquire) an actual object.

If you are using XML-based configuration metadata, you can specify the type (or class) of object that is to be instantiated using the 'class' attribute of the <bean/> element. This 'class' attribute (which internally eventually boils down to being a Class property on a BeanDefinition instance) is normally mandatory (see Section 3.2.3.2.3, “Instantiation using an instance factory method” and Section 3.6, “Bean definition inheritance” for the two exceptions) and is used for one of two purposes. The class property specifies the class of the bean to be constructed in the much more common case where the container itself directly creates the bean by calling its constructor reflectively (somewhat equivalent to Java code using the 'new' operator). In the less common case where the container invokes a static factory method on a class to create the bean, the class property specifies the actual class containing the static factory method that is to be invoked to create the object (the type of the object returned from the invocation of the static factory method may be the same class or another class entirely; it doesn't matter).

When creating a bean using the constructor approach, all normal classes are usable by and compatible with Spring. That is, the class being created does not need to implement any specific interfaces or be coded in a specific fashion. Just specifying the bean class should be enough. However, depending on what type of IoC you are going to use for that specific bean, you may need a default (empty) constructor.

Additionally, the Spring IoC container isn't limited to managing true JavaBeans; it is also able to manage virtually any class you want it to manage. Most people using Spring prefer to have actual JavaBeans (having just a default (no-argument) constructor and appropriate setters and getters modeled after the properties) in the container, but it is also possible to have more exotic non-bean-style classes in your container. If, for example, you need to use a legacy connection pool that absolutely does not adhere to the JavaBean specification, Spring can manage it as well.

When using XML-based configuration metadata you can specify your bean class like so:

<bean id="exampleBean" class="examples.ExampleBean"/>

<bean name="anotherExample" class="examples.ExampleBeanTwo"/>

The mechanism for supplying arguments to the constructor (if required), or setting properties of the object instance after it has been constructed, will be described shortly.
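For the common case of a no-argument constructor, "calling its constructor reflectively" amounts to roughly the following. ReflectiveInstantiation is a hypothetical helper; Spring's own instantiation code also handles constructor arguments and other cases that are not modeled in this sketch.

```java
// Hypothetical sketch: instantiate the class named in a 'class' attribute
// through its no-argument constructor, much like 'new' but driven by a name.
final class ReflectiveInstantiation {

    private ReflectiveInstantiation() {
    }

    static Object instantiate(String className) {
        try {
            Class<?> beanClass = Class.forName(className);
            // Requires an accessible no-argument constructor.
            return beanClass.getDeclaredConstructor().newInstance();
        } catch (ReflectiveOperationException ex) {
            throw new IllegalStateException("Could not instantiate " + className, ex);
        }
    }
}
```

Calling instantiate("java.lang.StringBuilder") returns a new, empty StringBuilder; a class without a usable no-argument constructor fails with an IllegalStateException.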

When defining a bean which is to be created using a static factory method, along with the class attribute which specifies the class containing the static factory method, another attribute named factory-method is needed to specify the name of the factory method itself. Spring expects to be able to call this method (with an optional list of arguments as described later) and get back a live object, which from that point on is treated as if it had been created normally via a constructor. One use for such a bean definition is to call static factories in legacy code.

The following example shows a bean definition which specifies that the bean is to be created by calling a factory-method. Note that the definition does not specify the type (class) of the returned object, only the class containing the factory method. In this example, the createInstance() method must be a static method.

<bean id="exampleBean"

class="examples.ExampleBean2"

factory-method="createInstance"/>

The mechanism for supplying (optional) arguments to the factory method, or setting properties of the object instance after it has been returned from the factory, will be described shortly.
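A sketch of what invoking a static factory method amounts to in plain reflection, given only the class name and the factory-method name. StaticFactoryInvoker is hypothetical, and the sketch assumes a public, no-argument, static factory method.

```java
import java.lang.reflect.Method;

// Hypothetical sketch: call a static factory method named by
// 'factory-method' on the class named by 'class'.
final class StaticFactoryInvoker {

    private StaticFactoryInvoker() {
    }

    static Object create(String factoryClassName, String factoryMethodName) {
        try {
            Class<?> factoryClass = Class.forName(factoryClassName);
            Method factoryMethod = factoryClass.getMethod(factoryMethodName);
            // A null target is passed because the method is static.
            return factoryMethod.invoke(null);
        } catch (ReflectiveOperationException ex) {
            throw new IllegalStateException("Factory method invocation failed", ex);
        }
    }
}
```

For instance, create("java.util.Collections", "emptyList") returns a java.util.List, which also shows that the returned object need not be an instance of the class declaring the factory method.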

In a fashion similar to instantiation via a static factory method, instantiation using an instance factory method is where the factory method of an existing bean from the container is invoked to create the new bean.

To use this mechanism, the 'class' attribute must be left empty, and the 'factory-bean' attribute must specify the name of a bean in the current (or parent/ancestor) container that contains the factory method. The factory method itself must still be set via the 'factory-method' attribute (as seen in the example below).

<!-- the factory bean, which contains a method called createInstance() -->

<bean id="myFactoryBean" class="...">

...

</bean>

<!-- the bean to be created via the factory bean -->

<bean id="exampleBean"

factory-bean="myFactoryBean"

factory-method="createInstance"/>

Although the mechanisms for setting bean properties are still to be discussed, one implication of this approach is that the factory bean itself can be managed and configured via DI.
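The instance factory variant differs only in the invocation target: the factory method is called on an existing bean rather than on a class. InstanceFactoryInvoker is a hypothetical toy container, and the sketch assumes a public no-argument factory method.

```java
import java.lang.reflect.Method;
import java.util.HashMap;
import java.util.Map;

// Hypothetical sketch: the factory is itself a bean held by a toy container,
// and its non-static factory method is invoked on that instance.
final class InstanceFactoryInvoker {

    private final Map<String, Object> beans = new HashMap<>();

    void register(String name, Object bean) {
        beans.put(name, bean);
    }

    Object create(String factoryBeanName, String factoryMethodName) {
        Object factoryBean = beans.get(factoryBeanName);
        if (factoryBean == null) {
            throw new IllegalArgumentException("No factory bean named '" + factoryBeanName + "'");
        }
        try {
            Method factoryMethod = factoryBean.getClass().getMethod(factoryMethodName);
            // The factory bean instance is the invocation target.
            return factoryMethod.invoke(factoryBean);
        } catch (ReflectiveOperationException ex) {
            throw new IllegalStateException("Factory method invocation failed", ex);
        }
    }
}
```

Because the factory is an ordinary registered object, it could itself have been configured (for example, had its properties set) before create(..) is called, which is the DI implication mentioned above.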

A BeanFactory is essentially nothing more than the interface for an advanced factory capable of maintaining a registry of different beans and their dependencies. The BeanFactory enables you to read bean definitions and access them using the bean factory. When using just the BeanFactory you would create one and read in some bean definitions in the XML format as follows:

Resource resource = new FileSystemResource("beans.xml");

BeanFactory factory = new XmlBeanFactory(resource);

Basically that's all there is to it. Using getBean(String) you can retrieve instances of your beans; the client-side view of the BeanFactory is surprisingly simple. The BeanFactory interface has only six methods for client code to call:

- boolean containsBean(String): returns true if the BeanFactory contains a bean definition or bean instance that matches the given name.

- Object getBean(String): returns an instance of the bean registered under the given name. Depending on how the bean was configured by the BeanFactory configuration, either a singleton and thus shared instance or a newly created bean will be returned. A BeansException will be thrown when either the bean could not be found (in which case it'll be a NoSuchBeanDefinitionException), or an exception occurred while instantiating and preparing the bean.

- Object getBean(String, Class): returns a bean, registered under the given name. The bean returned will be cast to the given Class. If the bean could not be cast, corresponding exceptions will be thrown (BeanNotOfRequiredTypeException). Furthermore, all rules of the getBean(String) method apply (see above).

- Class getType(String name): returns the Class of the bean with the given name. If no bean corresponding to the given name could be found, a NoSuchBeanDefinitionException will be thrown.

- boolean isSingleton(String): determines whether or not the bean definition or bean instance registered under the given name is a singleton (bean scopes such as singleton are explained later). If no bean corresponding to the given name could be found, a NoSuchBeanDefinitionException will be thrown.

- String[] getAliases(String): returns the aliases for the given bean name, if any were defined in the bean definition.
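To make the "shared instance or newly created bean" distinction in getBean(String) concrete, here is a toy container sketch. MiniBeanFactory, its define(..) method, and the use of java.util.function.Supplier as a bean "recipe" are assumptions for illustration; it throws plain JDK exceptions where Spring would throw NoSuchBeanDefinitionException or BeanNotOfRequiredTypeException, and getType(..) and getAliases(..) are omitted.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Supplier;

// Hypothetical toy container (not Spring's BeanFactory) mirroring part of the
// client-side contract described above.
final class MiniBeanFactory {

    private final Map<String, Supplier<?>> recipes = new HashMap<>();
    private final Map<String, Boolean> singletonFlags = new HashMap<>();
    private final Map<String, Object> singletonCache = new HashMap<>();

    void define(String name, Supplier<?> recipe, boolean singleton) {
        recipes.put(name, recipe);
        singletonFlags.put(name, singleton);
    }

    boolean containsBean(String name) {
        return recipes.containsKey(name);
    }

    Object getBean(String name) {
        Supplier<?> recipe = recipes.get(name);
        if (recipe == null) {
            throw new IllegalArgumentException("No bean named '" + name + "'");
        }
        if (isSingleton(name)) {
            // Shared instance: created on first request, then reused.
            return singletonCache.computeIfAbsent(name, n -> recipe.get());
        }
        // Not a singleton: a new object for every call.
        return recipe.get();
    }

    <T> T getBean(String name, Class<T> requiredType) {
        Object bean = getBean(name);
        if (!requiredType.isInstance(bean)) {
            throw new ClassCastException("Bean '" + name + "' is not a " + requiredType.getName());
        }
        return requiredType.cast(bean);
    }

    boolean isSingleton(String name) {
        Boolean flag = singletonFlags.get(name);
        if (flag == null) {
            throw new IllegalArgumentException("No bean named '" + name + "'");
        }
        return flag;
    }
}
```

Defining the same recipe once as a singleton and once as a non-singleton shows the difference: repeated getBean(..) calls return the identical object in the first case and distinct objects in the second.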

Your typical enterprise application is not made up of a single object (or bean in the Spring parlance). Even the simplest of applications will no doubt have at least a handful of objects that work together to present what the end-user sees as a coherent application. This next section explains how you go from defining a number of bean definitions that stand alone to a fully realized application where objects work (or collaborate) together to achieve some goal (usually an application that does what the end-user wants).

The basic principle behind Dependency Injection (DI) is that objects define their dependencies (that is to say the other objects they work with) only through constructor arguments, arguments to a factory method, or properties which are set on the object instance after it has been constructed or returned from a factory method. Then, it is the job of the container to actually inject those dependencies when it creates the bean. This is fundamentally the inverse, hence the name Inversion of Control (IoC), of the bean itself being in control of instantiating or locating its dependencies on its own using direct construction of classes, or something like the Service Locator pattern.

It becomes evident upon usage that code gets much cleaner when the DI principle is applied, and reaching a higher grade of decoupling is much easier when beans do not look up their dependencies, but are provided with them (and additionally do not even know where the dependencies are located and of what actual class they are).

As touched on in the previous paragraph, DI exists in two major variants, namely Setter Injection, and Constructor Injection.

Setter-based DI is realized by calling setter methods on your beans after invoking a no-argument constructor or no-argument static factory method to instantiate your bean.

Find below an example of a class that can only be dependency injected using pure setter injection. Note that there is nothing special about this class... it is plain old Java.

public class SimpleMovieLister {

// the SimpleMovieLister has a dependency on the MovieFinder

private MovieFinder movieFinder;

// a setter method so that the Spring container can 'inject' a MovieFinder

public void setMovieFinder(MovieFinder movieFinder) {

this.movieFinder = movieFinder;

}

// business logic that actually 'uses' the injected MovieFinder is omitted...

}
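Because the class is plain Java, the wiring the container performs for setter injection can be reproduced by hand, for example in a unit test with a stub finder. In this sketch the findAll() method on MovieFinder, the countMovies() business method, and the SetterWiringDemo class are all hypothetical; the original text does not show them.

```java
import java.util.List;

// Hypothetical contract: findAll() is an assumption made for this sketch.
interface MovieFinder {
    List<String> findAll();
}

// Same shape as the SimpleMovieLister above, plus an illustrative method.
class SimpleMovieLister {

    private MovieFinder movieFinder;

    public void setMovieFinder(MovieFinder movieFinder) {
        this.movieFinder = movieFinder;
    }

    public int countMovies() {
        if (movieFinder == null) {
            // With setter injection the object can exist before its dependency is set.
            throw new IllegalStateException("movieFinder has not been injected");
        }
        return movieFinder.findAll().size();
    }
}

// Setter injection done by hand: no-argument constructor first, then the setter.
final class SetterWiringDemo {

    private SetterWiringDemo() {
    }

    static SimpleMovieLister wire(MovieFinder finder) {
        SimpleMovieLister lister = new SimpleMovieLister();
        lister.setMovieFinder(finder);
        return lister;
    }
}
```

Since MovieFinder has a single abstract method in this sketch, a test can pass a lambda such as () -> Arrays.asList("Brazil", "Alien") as the stub.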

Constructor-based DI is realized by invoking a constructor with a number of arguments, each representing a collaborator. Additionally, calling a static factory method with specific arguments to construct the bean can be considered almost equivalent, and the rest of this text will consider arguments to a constructor and arguments to a static factory method similarly.

Find below an example of a class that could only be dependency injected using constructor injection. Again, note that there is nothing special about this class.

public class SimpleMovieLister {

// the SimpleMovieLister has a dependency on the MovieFinder

private MovieFinder movieFinder;

// a constructor so that the Spring container can 'inject' a MovieFinder

public SimpleMovieLister(MovieFinder movieFinder) {

this.movieFinder = movieFinder;

}

// business logic that actually 'uses' the injected MovieFinder is omitted...

}
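A constructor-injected variant in plain Java, as a sketch. The findAll() and countMovies() methods are hypothetical, as is the null check, which is a design choice of this sketch rather than anything the text requires.

```java
import java.util.List;
import java.util.Objects;

// Hypothetical contract; findAll() is an assumption for this sketch.
interface MovieFinder {
    List<String> findAll();
}

// Constructor injection lets the dependency be final, so a lister
// can never exist without its MovieFinder.
class SimpleMovieLister {

    private final MovieFinder movieFinder;

    public SimpleMovieLister(MovieFinder movieFinder) {
        this.movieFinder = Objects.requireNonNull(movieFinder, "movieFinder must not be null");
    }

    // Illustrative business method (hypothetical).
    public int countMovies() {
        return movieFinder.findAll().size();
    }
}
```

With the null check in place, a missing collaborator fails at construction time rather than at first use.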

The BeanFactory supports both of these variants for injecting dependencies into beans it manages. (It in fact also supports injecting setter-based dependencies after some dependencies have already been supplied via the constructor approach.)

The configuration for the dependencies comes in the form of a BeanDefinition, which is used together with PropertyEditor instances to know how to convert properties from one format to another. However, most users of Spring will not be dealing with these classes directly (that is, programmatically), but rather with an XML definition file which will be converted internally into instances of these classes, and used to load an entire Spring IoC container instance.

Bean dependency resolution generally happens as follows:

1. The BeanFactory is created and initialized with a configuration which describes all the beans. (Most Spring users use a BeanFactory or ApplicationContext implementation that supports XML format configuration files.)

2. Each bean has dependencies expressed in the form of properties, constructor arguments, or arguments to the static-factory method when that is used instead of a normal constructor. These dependencies will be provided to the bean when the bean is actually created.

3. Each property or constructor argument is either an actual definition of the value to set, or a reference to another bean in the container.

4. Each property or constructor argument which is a value must be able to be converted from whatever format it was specified in, to the actual type of that property or constructor argument. By default Spring can convert a value supplied in string format to all built-in types, such as int, long, String, boolean, etc.
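A minimal sketch of that String-to-type conversion, covering only the few built-in types named above. Spring performs this through PropertyEditor instances, as described earlier; SimpleValueConverter is a hypothetical name and is deliberately much simpler.

```java
// Hypothetical converter: turns a configured String into the target type of a
// property or constructor argument. Only a handful of types are supported.
final class SimpleValueConverter {

    private SimpleValueConverter() {
    }

    static Object convert(String text, Class<?> targetType) {
        if (targetType == String.class) {
            return text;
        }
        if (targetType == int.class || targetType == Integer.class) {
            return Integer.valueOf(text.trim());
        }
        if (targetType == long.class || targetType == Long.class) {
            return Long.valueOf(text.trim());
        }
        if (targetType == boolean.class || targetType == Boolean.class) {
            // Boolean.valueOf treats any text other than "true" (ignoring case) as false.
            return Boolean.valueOf(text.trim());
        }
        throw new IllegalArgumentException("No conversion to " + targetType.getName());
    }
}
```

The same text "42" becomes an Integer for an int target and stays a String for a String target: the declared type, not the text, decides the result.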

The Spring container validates the configuration of each bean as the container is created, including the validation that properties which are bean references are actually referring to valid beans. However, the bean properties themselves are not set until the bean is actually created. For those beans that are singleton-scoped and set to be pre-instantiated (such as singleton beans in an ApplicationContext), creation happens at the time that the container is created, but otherwise this is only when the bean is requested. When a bean actually has to be created, this will potentially cause a graph of other beans to be created, as its dependencies and its dependencies' dependencies (and so on) are created and assigned.

You can generally trust Spring to do the right thing. It will detect misconfiguration issues, such as references to non-existent beans and circular dependencies, at container load-time. It will actually set properties and resolve dependencies as late as possible, which is when the bean is actually created. This means that a Spring container which has loaded correctly can later generate an exception when you request a bean if there is a problem creating that bean or one of its dependencies. This could happen if the bean throws an exception as a result of a missing or invalid property, for example.

This potentially delayed visibility of some configuration issues is why ApplicationContext implementations by default pre-instantiate singleton beans. At the cost of some upfront time and memory to create these beans before they are actually needed, you find out about configuration issues when the ApplicationContext is created, not later. If you wish, you can still override this default behavior and set any of these singleton beans to lazy-initialize (that is, not be pre-instantiated).

Finally, if it is not immediately apparent, it is worth mentioning that when one or more collaborating beans are being injected into a dependent bean, each collaborating bean is totally configured prior to being passed (via one of the DI flavors) to the dependent bean. This means that if bean A has a dependency on bean B, the Spring IoC container will totally configure bean B prior to invoking the setter method on bean A; you can read 'totally configure' to mean that the bean will be instantiated (if not a pre-instantiated singleton), all of its dependencies will be set, and the relevant lifecycle methods (such as a configured init method or the InitializingBean callback method) will all be invoked.
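The "collaborators first" rule, together with the dependency graph described earlier, can be sketched as a depth-first resolver. ToyDependencyResolver is hypothetical: beans are reduced to names, each bean lists its collaborators, and strings stand in for real objects. It simply rejects every cycle, which is a simplification; it does not model how Spring itself treats circular references.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Hypothetical toy resolver: collaborators are fully built before the
// bean that depends on them.
final class ToyDependencyResolver {

    private final Map<String, List<String>> dependencies = new HashMap<>();
    private final Map<String, String> created = new LinkedHashMap<>();
    private final Set<String> inCreation = new HashSet<>();

    void define(String name, List<String> collaborators) {
        dependencies.put(name, collaborators);
    }

    String getBean(String name) {
        if (created.containsKey(name)) {
            return created.get(name); // singleton already built
        }
        List<String> collaborators = dependencies.get(name);
        if (collaborators == null) {
            throw new IllegalArgumentException("No bean named '" + name + "'");
        }
        if (!inCreation.add(name)) {
            throw new IllegalStateException("Circular dependency on '" + name + "'");
        }
        for (String collaborator : collaborators) {
            getBean(collaborator); // fully build each collaborator first
        }
        inCreation.remove(name);
        String instance = "instance of " + name; // stands in for the real object
        created.put(name, instance);
        return instance;
    }

    List<String> creationOrder() {
        return new ArrayList<>(created.keySet());
    }
}
```

Requesting bean a, where a depends on b and b depends on c, creates the beans in the order c, b, a.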

First, an example of using XML-based configuration metadata for setter-based DI. Find below a small part of a Spring XML configuration file specifying some bean definitions.

<bean id="exampleBean" class="examples.ExampleBean">

<!-- setter injection using the nested <ref/> element -->

<property name="beanOne"><ref bean="anotherExampleBean"/></property>

<!-- setter injection using the neater 'ref' attribute -->

<property name="beanTwo" ref="yetAnotherBean"/>

<property name="integerProperty" value="1"/>

</bean>

<bean id="anotherExampleBean" class="examples.AnotherBean"/>

<bean id="yetAnotherBean" class="examples.YetAnotherBean"/>

public class ExampleBean {

private AnotherBean beanOne;

private YetAnotherBean beanTwo;

private int i;

public void setBeanOne(AnotherBean beanOne) {

this.beanOne = beanOne;

}

public void setBeanTwo(YetAnotherBean beanTwo) {

this.beanTwo = beanTwo;

}

public void setIntegerProperty(int i) {

this.i = i;

}

}

As you can see, setters have been declared to match against the properties specified in the XML file.
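The match between a <property name="..."/> element and a setter follows the JavaBeans naming convention (beanOne maps to setBeanOne). A rough sketch of setting a value property that way, assuming only String and int targets; ToyPropertySetter and SampleBean are hypothetical, and Spring's BeanWrapper and PropertyEditors are far more general.

```java
import java.lang.reflect.Method;

// Hypothetical bean with one int and one String property.
class SampleBean {

    private int integerProperty;
    private String label;

    public void setIntegerProperty(int integerProperty) {
        this.integerProperty = integerProperty;
    }

    public int getIntegerProperty() {
        return integerProperty;
    }

    public void setLabel(String label) {
        this.label = label;
    }

    public String getLabel() {
        return label;
    }
}

// Hypothetical sketch of <property name="..." value="..."/> handling.
final class ToyPropertySetter {

    private ToyPropertySetter() {
    }

    static String setterName(String propertyName) {
        return "set" + Character.toUpperCase(propertyName.charAt(0)) + propertyName.substring(1);
    }

    static void setProperty(Object bean, String propertyName, String value) {
        String setter = setterName(propertyName);
        for (Method method : bean.getClass().getMethods()) {
            if (method.getName().equals(setter) && method.getParameterCount() == 1) {
                Class<?> type = method.getParameterTypes()[0];
                // Only int is converted; any other type receives the raw String.
                Object converted = (type == int.class) ? (Object) Integer.valueOf(value) : value;
                try {
                    method.invoke(bean, converted);
                    return;
                } catch (ReflectiveOperationException ex) {
                    throw new IllegalStateException("Could not set " + propertyName, ex);
                }
            }
        }
        throw new IllegalArgumentException("No setter " + setter + " on " + bean.getClass().getName());
    }
}
```

Setting integerProperty to "1" calls setIntegerProperty(1), mirroring the integerProperty entry in the XML above.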

Now, an example of using constructor-based DI. Find below a snippet from an XML configuration that specifies constructor arguments, and the corresponding Java class.

<bean id="exampleBean" class="examples.ExampleBean">

<!-- constructor injection using the nested <ref/> element -->

<constructor-arg><ref bean="anotherExampleBean"/></constructor-arg>

<!-- constructor injection using the neater 'ref' attribute -->

<constructor-arg ref="yetAnotherBean"/>

<constructor-arg type="int" value="1"/>

</bean>

<bean id="anotherExampleBean" class="examples.AnotherBean"/>

<bean id="yetAnotherBean" class="examples.YetAnotherBean"/>

public class ExampleBean {

private AnotherBean beanOne;

private YetAnotherBean beanTwo;

private int i;

public ExampleBean(

AnotherBean anotherBean, YetAnotherBean yetAnotherBean, int i) {

this.beanOne = anotherBean;

this.beanTwo = yetAnotherBean;

this.i = i;

}

}

As you can see, the constructor arguments specified in the bean definition will be passed as arguments to the constructor of the ExampleBean.

Now consider a variant of this where instead of using a constructor, Spring is told to call a static factory method to return an instance of the object:

<bean id="exampleBean" class="examples.ExampleBean"

factory-method="createInstance">

<constructor-arg ref="anotherExampleBean"/>

<constructor-arg ref="yetAnotherBean"/>

<constructor-arg value="1"/>

</bean>

<bean id="anotherExampleBean" class="examples.AnotherBean"/>

<bean id="yetAnotherBean" class="examples.YetAnotherBean"/>

public class ExampleBean {

// a private constructor

private ExampleBean(...) {

...

}

// a static factory method; the arguments to this method can be

// considered the dependencies of the bean that is returned,

// regardless of how those arguments are actually used.

public static ExampleBean createInstance (

AnotherBean anotherBean, YetAnotherBean yetAnotherBean, int i) {

ExampleBean eb = new ExampleBean (...);

// some other operations...

return eb;

}

}

Note that arguments to the static factory method are supplied via constructor-arg elements, exactly the same as if a constructor had actually been used. Also, it is important to realize that the type of the class being returned by the factory method does not have to be of the same type as the class which contains the static factory method, although in this example it is. An instance (non-static) factory method would be used in an essentially identical fashion (aside from the use of the factory-bean attribute instead of the class attribute), so details will not be discussed here.

Constructor argument resolution matching occurs using the argument's type. If there is no potential for ambiguity in the constructor arguments of a bean definition, then the order in which the constructor arguments are defined in a bean definition is the order in which those arguments will be supplied to the appropriate constructor when it is being instantiated. Consider the following class:

package x.y;

public class Foo {

public Foo(Bar bar, Baz baz) {

// ...

}

}

There is no potential for ambiguity here (assuming of course that Bar

and Baz classes are not related in an inheritance hierarchy).

Thus the following configuration will work just fine, and you do not need to

specify the constructor argument indexes and/or types explicitly.

<beans>

<bean name="foo" class="x.y.Foo">

<constructor-arg>

<bean class="x.y.Bar"/>

</constructor-arg>

<constructor-arg>

<bean class="x.y.Baz"/>

</constructor-arg>

</bean>

</beans>
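The following sketch models the idea of matching arguments to constructor parameters by type. It is a deliberately simplified stand-in, not Spring's actual resolution algorithm. Each supplied argument is assigned to the parameter whose type it is an instance of, and resolution fails as soon as more than one argument could fill the same parameter. Bar, Baz and Foo mirror the classes above. FullName and TypeMatchingSketch are hypothetical, and the sketch only handles reference-typed parameters.

```java
import java.lang.reflect.Constructor;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class Bar {}
class Baz {}

class Foo {
    final Bar bar;
    final Baz baz;
    public Foo(Bar bar, Baz baz) {
        this.bar = bar;
        this.baz = baz;
    }
}

// Hypothetical class with two parameters of the same type, to show the ambiguous case.
class FullName {
    public FullName(String first, String last) {}
}

class TypeMatchingSketch {
    // Assigns each supplied argument to the parameter whose type it is an instance of.
    static Object[] matchByType(Constructor<?> ctor, List<Object> args) {
        Class<?>[] paramTypes = ctor.getParameterTypes();
        if (paramTypes.length != args.size()) {
            throw new IllegalArgumentException("argument count mismatch");
        }
        List<Object> remaining = new ArrayList<>(args);
        Object[] ordered = new Object[paramTypes.length];
        for (int i = 0; i < paramTypes.length; i++) {
            Object match = null;
            for (Object candidate : remaining) {
                if (paramTypes[i].isInstance(candidate)) {
                    if (match != null) {
                        throw new IllegalStateException("ambiguous arguments for parameter " + i);
                    }
                    match = candidate;
                }
            }
            if (match == null) {
                throw new IllegalStateException("no argument fits parameter " + i);
            }
            ordered[i] = match;
            remaining.remove(match);
        }
        return ordered;
    }

    // Uses the first public constructor; assumes the class declares exactly one.
    static <T> T instantiate(Class<T> type, Object... args) {
        Constructor<?> ctor = type.getConstructors()[0];
        try {
            return type.cast(ctor.newInstance(matchByType(ctor, Arrays.asList(args))));
        } catch (ReflectiveOperationException ex) {
            throw new IllegalStateException("instantiation failed", ex);
        }
    }
}
```

With unrelated Bar and Baz types every argument has exactly one home. By contrast, instantiate(FullName.class, "Douglas", "Adams") fails with an ambiguity error, which is the kind of situation an explicit index resolves.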

When another bean is referenced, the type is known, and

matching can occur (as was the case with the preceding example).

When a simple type is used, such as

<value>true</value>, Spring cannot

determine the type of the value, and so cannot match by type without

help. Consider the following class:

package examples;

public class ExampleBean {

// No. of years to calculate the Ultimate Answer

private int years;

// The Answer to Life, the Universe, and Everything

private String ultimateAnswer;

public ExampleBean(int years, String ultimateAnswer) {

this.years = years;

this.ultimateAnswer = ultimateAnswer;

}

}

The above scenario can use type matching

with simple types by explicitly specifying the type of the constructor

argument using the 'type' attribute. For example:

<bean id="exampleBean" class="examples.ExampleBean">
    <constructor-arg type="int" value="7500000"/>
    <constructor-arg type="java.lang.String" value="42"/>
</bean>
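To see why the type attribute helps, note that both "7500000" and "42" arrive as plain strings. The following hypothetical sketch is a much-reduced model of the conversion step. It uses each declared type name to turn the string into an argument of that type, then looks up the public constructor with exactly those parameter types. TypedArgSketch is an invented helper. It assumes the type attributes are listed in parameter order and supports only int and java.lang.String.

```java
import java.lang.reflect.Constructor;

class ExampleBean {
    private final int years;
    private final String ultimateAnswer;
    public ExampleBean(int years, String ultimateAnswer) {
        this.years = years;
        this.ultimateAnswer = ultimateAnswer;
    }
    int getYears() { return years; }
    String getUltimateAnswer() { return ultimateAnswer; }
}

class TypedArgSketch {
    // Maps a type name, as written in the 'type' attribute, to a Class (two cases only).
    static Class<?> resolveType(String typeName) {
        switch (typeName) {
            case "int": return int.class;
            case "java.lang.String": return String.class;
            default: throw new IllegalArgumentException("unsupported type: " + typeName);
        }
    }

    // Converts the literal text of a 'value' attribute into the declared type.
    static Object convert(String text, Class<?> type) {
        return type == int.class ? Integer.valueOf(text) : text;
    }

    // typeNames[k] and values[k] describe the k-th <constructor-arg type="..." value="..."/>.
    static <T> T instantiate(Class<T> beanClass, String[] typeNames, String[] values) {
        Class<?>[] paramTypes = new Class<?>[typeNames.length];
        Object[] args = new Object[values.length];
        for (int k = 0; k < typeNames.length; k++) {
            paramTypes[k] = resolveType(typeNames[k]);
            args[k] = convert(values[k], paramTypes[k]);
        }
        try {
            Constructor<T> ctor = beanClass.getConstructor(paramTypes);
            return ctor.newInstance(args);
        } catch (ReflectiveOperationException ex) {
            throw new IllegalStateException("no matching constructor", ex);
        }
    }
}
```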

Constructor arguments can have their index specified explicitly by use of

the index attribute. For example:

<bean id="exampleBean" class="examples.ExampleBean">
    <constructor-arg index="0" value="7500000"/>
    <constructor-arg index="1" value="42"/>
</bean>

As well as solving the ambiguity problem of multiple simple values, specifying an index also solves the problem of ambiguity where a constructor may have two arguments of the same type. Note that the index is 0-based.
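An index removes the guesswork entirely, because index k always fills constructor parameter k. That is why it still works when two parameters share a type. In the hypothetical sketch below, IndexedArgSketch places each value into its slot by index and converts it to that parameter's type. It assumes the bean class has a single public constructor, and its conversion covers only int and String. Credentials is an invented example class.

```java
import java.lang.reflect.Constructor;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical bean whose constructor takes two arguments of the same type.
class Credentials {
    private final String username;
    private final String password;
    public Credentials(String username, String password) {
        this.username = username;
        this.password = password;
    }
    String getUsername() { return username; }
    String getPassword() { return password; }
}

class IndexedArgSketch {
    // indexedValues maps each 0-based 'index' attribute to its 'value' attribute.
    static <T> T instantiate(Class<T> beanClass, Map<Integer, String> indexedValues) {
        @SuppressWarnings("unchecked")
        Constructor<T> ctor = (Constructor<T>) beanClass.getConstructors()[0]; // assumes one public constructor
        Class<?>[] paramTypes = ctor.getParameterTypes();
        if (indexedValues.size() != paramTypes.length) {
            throw new IllegalArgumentException("expected " + paramTypes.length + " indexed arguments");
        }
        Object[] args = new Object[paramTypes.length];
        for (Map.Entry<Integer, String> e : indexedValues.entrySet()) {
            int index = e.getKey();
            if (index < 0 || index >= args.length) {
                throw new IllegalArgumentException("index out of range: " + index);
            }
            // Position decides the parameter; the type is only used for conversion.
            args[index] = paramTypes[index] == int.class ? Integer.valueOf(e.getValue()) : e.getValue();
        }
        try {
            return ctor.newInstance(args);
        } catch (ReflectiveOperationException ex) {
            throw new IllegalStateException("instantiation failed", ex);
        }
    }

    public static void main(String[] args) {
        Map<Integer, String> values = new TreeMap<>();
        values.put(1, "secret"); // like <constructor-arg index="1" value="secret"/>
        values.put(0, "alice");  // like <constructor-arg index="0" value="alice"/>
        Credentials c = instantiate(Credentials.class, values);
        System.out.println(c.getUsername() + " / " + c.getPassword()); // prints alice / secret
    }
}
```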

As mentioned in the previous section, bean properties and

constructor arguments can be defined as either references to other

managed beans (collaborators), or values defined inline. Spring's XML-based

configuration metadata supports a number of sub-element types

within its <property/> and

<constructor-arg/> elements for just this purpose.

The <value/> element specifies a property or

constructor argument as a human-readable string representation.

As mentioned previously,

JavaBeans PropertyEditors are used to convert these

string values from a String to the actual type of the

property or argument.
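The following sketch shows, under simplifying assumptions, how a <property name="..."> entry with a textual value can become a setter call using only the standard JavaBeans API. java.beans.Introspector finds the write method for the property name, and a PropertyEditor converts the text when the property is not a String. PoolSettings, IntTextEditor and ValueInjectionSketch are hypothetical. Spring registers its own set of editors and does considerably more than this.

```java
import java.beans.BeanInfo;
import java.beans.IntrospectionException;
import java.beans.Introspector;
import java.beans.PropertyDescriptor;
import java.beans.PropertyEditor;
import java.beans.PropertyEditorSupport;

// Hypothetical bean used only for this sketch.
class PoolSettings {
    private String driverClassName;
    private int maxActive;
    public String getDriverClassName() { return driverClassName; }
    public void setDriverClassName(String driverClassName) { this.driverClassName = driverClassName; }
    public int getMaxActive() { return maxActive; }
    public void setMaxActive(int maxActive) { this.maxActive = maxActive; }
}

// Minimal hypothetical editor that turns text into an Integer.
class IntTextEditor extends PropertyEditorSupport {
    @Override
    public void setAsText(String text) {
        setValue(Integer.valueOf(text.trim()));
    }
}

class ValueInjectionSketch {
    // Finds the JavaBeans write method for 'name', converts 'text' to the property type, and calls it.
    static void setProperty(Object bean, String name, String text) {
        try {
            BeanInfo info = Introspector.getBeanInfo(bean.getClass());
            for (PropertyDescriptor pd : info.getPropertyDescriptors()) {
                if (!pd.getName().equals(name) || pd.getWriteMethod() == null) {
                    continue;
                }
                Object converted;
                if (pd.getPropertyType() == String.class) {
                    converted = text; // no conversion needed
                } else if (pd.getPropertyType() == int.class) {
                    PropertyEditor editor = new IntTextEditor();
                    editor.setAsText(text);
                    converted = editor.getValue();
                } else {
                    throw new IllegalArgumentException("no editor for " + pd.getPropertyType());
                }
                pd.getWriteMethod().invoke(bean, converted); // e.g. setDriverClassName(String)
                return;
            }
        } catch (IntrospectionException | ReflectiveOperationException ex) {
            throw new IllegalStateException("could not set property '" + name + "'", ex);
        }
        throw new IllegalArgumentException("no writable property named '" + name + "'");
    }
}
```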

<bean id="myDataSource" class="org.apache.commons.dbcp.BasicDataSource" destroy-method="close">

<!-- results in a setDriverClassName(String) call -->

<property name="driverClassName">

<value>com.mysql.jdbc.Driver</value>

</property>

<property name="url">

<value>jdbc:mysql://localhost:3306/mydb</value>

</property>

<property name="username">

<value>root</value>

</property>

<property name="password">

<value>masterkaoli</value>

</property>

</bean>

The <property/> and <constructor-arg/>

elements also support the use of the 'value' attribute, which can lead

to much more succinct configuration. When using the 'value' attribute,

the above bean definition reads like so:

<bean id="myDataSource" class="org.apache.commons.dbcp.BasicDataSource" destroy-method="close">

<!-- results in a setDriverClassName(String) call -->

<property name="driverClassName" value="com.mysql.jdbc.Driver"/>

<property name="url" value="jdbc:mysql://localhost:3306/mydb"/>

<property name="username" value="root"/>

<property name="password" value="masterkaoli"/>

</bean>

The Spring team generally prefer the attribute style over the use of nested

<value/> elements. If you are reading this reference manual

straight through from top to bottom (wow!) then we are getting slightly ahead of ourselves here,

but you can also configure a java.util.Properties instance like so:

<bean id="mappings" class="org.springframework.beans.factory.config.PropertyPlaceholderConfigurer">

<!-- typed as a java.util.Properties -->

<property name="properties">

<value>

jdbc.driver.className=com.mysql.jdbc.Driver

jdbc.url=jdbc:mysql://localhost:3306/mydb

</value>

</property>

</bean>

Can you see what is happening? The Spring container is converting the text inside

the <value/> element into a java.util.Properties

instance using the JavaBeans PropertyEditor mechanism.

This is a nice shortcut, and is one of a few places where the Spring team do favor the

use of the nested <value/> element over the 'value'

attribute style.
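A minimal PropertyEditor that performs this kind of text-to-Properties conversion can be sketched with the JDK's own Properties.load(Reader). TextToPropertiesEditor is a hypothetical name, and this is not Spring's own implementation. Properties.load already ignores blank lines and leading whitespace, which suits the indented text of a <value/> element.

```java
import java.beans.PropertyEditorSupport;
import java.io.IOException;
import java.io.StringReader;
import java.util.Properties;

// Hypothetical editor: parses key=value lines into a java.util.Properties instance.
class TextToPropertiesEditor extends PropertyEditorSupport {
    @Override
    public void setAsText(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text == null ? "" : text));
        } catch (IOException ex) {
            throw new IllegalArgumentException("could not parse properties text", ex);
        }
        setValue(props);
    }
}

class PropertiesEditorDemo {
    public static void main(String[] args) {
        TextToPropertiesEditor editor = new TextToPropertiesEditor();
        editor.setAsText("jdbc.driver.className=com.mysql.jdbc.Driver\njdbc.url=jdbc:mysql://localhost:3306/mydb");
        Properties props = (Properties) editor.getValue();
        System.out.println(props.getProperty("jdbc.url")); // prints jdbc:mysql://localhost:3306/mydb
    }
}
```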

The idref element is simply an error-proof way to

pass the id of another bean in the container (to

a <constructor-arg/> or <property/>

element).

<bean id="theTargetBean" class="..."/>

<bean id="client" class="...">

<property name="targetName">

<idref bean="theTargetBean" />

</property>

</bean>

The above bean definition snippet is exactly equivalent (at runtime) to the following snippet:

<bean id="theTargetBean" class="..."/>

<bean id="client" class="...">

<property name="targetName">

<value>theTargetBean</value>

</property>

</bean>

The main reason the first form is preferable to

the second is that using the idref tag allows the

container to validate at deployment time that the

referenced, named bean actually exists. In the second variation,

no validation is performed on the value that is passed to the

'targetName' property of the 'client'

bean. Any typo will only be discovered (with most likely fatal results)

when the 'client' bean is actually instantiated.

If the 'client' bean is a

prototype bean, this typo

(and the resulting exception) may only be discovered long after the

container is actually deployed.
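The difference in when such errors surface can be modelled with a tiny registry. MiniRegistry is invented for this sketch and is not a Spring class. An idref-style value is checked against the registered bean names when the configuration is processed. A plain string value is accepted as-is, so a typo only shows up later, when something tries to use the name.

```java
import java.util.LinkedHashSet;
import java.util.Set;

// Hypothetical registry of bean names; models only the validation difference.
class MiniRegistry {
    private final Set<String> beanNames = new LinkedHashSet<>();

    void register(String name) { beanNames.add(name); }

    // <idref bean="..."/>-style: the name is validated when the definition is processed.
    String idref(String name) {
        if (!beanNames.contains(name)) {
            throw new IllegalArgumentException("no bean named '" + name + "' is defined");
        }
        return name; // the injected value is still just the String name
    }

    // <value>...</value>-style: no check at all; any string is accepted.
    String value(String text) {
        return text;
    }
}

class IdrefDemo {
    public static void main(String[] args) {
        MiniRegistry registry = new MiniRegistry();
        registry.register("theTargetBean");
        System.out.println(registry.value("theTargetBaen")); // prints theTargetBaen (typo accepted)
        try {
            registry.idref("theTargetBaen");
        } catch (IllegalArgumentException ex) {
            System.out.println("rejected when processed: " + ex.getMessage());
        }
    }
}
```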

Additionally, if the bean being referred to is in the same XML unit, and

the bean name is the bean id, the 'local'

attribute may be used, which allows the XML parser itself to validate the bean

id even earlier, at XML document parse time.

<property name="targetName">

<!-- a bean with an id of 'theTargetBean' must exist,

otherwise an XML exception will be thrown -->

<idref local="theTargetBean"/>

</property>

By way of an example, one common place (at least in pre-Spring 2.0

configuration) where the <idref/> element brings value is in the

configuration of AOP interceptors in a

ProxyFactoryBean bean definition. If you use

<idref/> elements when specifying the interceptor names, there is

no chance of inadvertently misspelling an interceptor id.

The ref element is the final element allowed

inside a <constructor-arg/> or

<property/> definition element. It is used to

set the value of the specified property to be a reference to another

bean managed by the container (a collaborator). As

mentioned in a previous section, the referred-to bean is considered to

be a dependency of the bean whose property is being set, and will be

initialized on demand as needed (if it is a singleton bean it may have

already been initialized by the container) before the property is set.

All references are ultimately just a reference to another object, but

there are 3 variations on how the id/name of the other object may be

specified, which determines how scoping and validation are handled.

Specifying the target bean by using the bean

attribute of the <ref/> tag is the most general form,

and will allow creating a reference to any bean in the same

container (whether or not in the same XML file), or parent container.

The value of the 'bean' attribute may be the same as either the

'id' attribute of the target bean, or one of the

values in the 'name' attribute of the target bean.

<ref bean="someBean"/>

Specifying the target bean by using the local

attribute leverages the ability of the XML parser to validate XML id

references within the same file. The value of the

local attribute must be the same as the

id attribute of the target bean. The XML parser

will issue an error if no matching element is found in the same file.

As such, using the local variant is the best choice (in order to know

about errors as early as possible) if the target bean is in the same

XML file.

<ref local="someBean"/>

Specifying the target bean by using the 'parent'

attribute allows a reference to be created to a bean which is in a

parent container of the current container. The value of the

'parent' attribute may be the same as either the

'id' attribute of the target bean, or one of the

values in the 'name' attribute of the target bean,

and the target bean must be in a parent container to the current one.

The main use of this bean reference variant is when you have a hierarchy

of containers and you want to wrap an existing bean in a parent container

with some sort of proxy which will have the same name as the parent bean.
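The lookup rules for the bean and parent attributes can be sketched with a hypothetical two-level container. MiniContainer is invented here and is not Spring's BeanFactory. In this model, a bean-style reference searches the current container first and then its ancestors, while a parent-style reference skips the current container. That lets a child bean wrap a parent bean of the same name.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical container with an optional parent.
class MiniContainer {
    private final MiniContainer parent;
    private final Map<String, Object> beans = new HashMap<>();

    MiniContainer(MiniContainer parent) { this.parent = parent; }

    void register(String name, Object bean) { beans.put(name, bean); }

    // <ref bean="..."/>-style: look in this container first, then walk up the parents.
    Object refBean(String name) {
        Object bean = beans.get(name);
        if (bean != null) return bean;
        if (parent != null) return parent.refBean(name);
        throw new IllegalArgumentException("no bean named '" + name + "'");
    }

    // <ref parent="..."/>-style: skip this container and start the search in the parent.
    Object refParent(String name) {
        if (parent == null) throw new IllegalStateException("container has no parent");
        return parent.refBean(name);
    }
}

class ParentRefDemo {
    public static void main(String[] args) {
        MiniContainer parent = new MiniContainer(null);
        parent.register("accountService", "SimpleAccountService instance");
        MiniContainer child = new MiniContainer(parent);
        // The child's bean has the same name and wraps the parent's bean.
        child.register("accountService", "proxy around " + child.refParent("accountService"));
        System.out.println(child.refBean("accountService")); // prints proxy around SimpleAccountService instance
    }
}
```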

<!-- in the parent context -->
<bean id="accountService" class="com.foo.SimpleAccountService">
    <!-- insert dependencies as required here -->
</bean>

<!-- in the child (descendant) context -->
<!-- notice that the name of this bean is the same as the name of the 'parent' bean -->
<bean id="accountService"
      class="org.springframework.aop.framework.ProxyFactoryBean">
    <property name="target">
        <ref parent="accountService"/>  <!-- notice how we refer to the parent bean -->
    </property>
    <!-- insert other configuration and dependencies as required here -->
</bean>

A <bean/> element inside the

<property/> or <constructor-arg/>

elements is used to define a so-called inner bean. An

inner bean definition does not need to have any id or name defined, and it is

best not to even specify any id or name value because the id or name value

simply will be ignored by the container.

<bean id="outer" class="...">
    <!-- instead of using a reference to a target bean, simply define the target bean inline -->
    <property name="target">
        <bean class="com.mycompany.Person"> <!-- this is the inner bean -->
            <property name="name" value="Fiona Apple"/>
            <property name="age" value="25"/>
        </bean>
    </property>
</bean>

Note that in the specific case of inner beans, the 'scope'

flag and any 'id' or 'name' attribute are

effectively ignored. Inner beans are always anonymous and they

are always scoped as

prototypes. Please also note

that it is not possible to inject inner beans into

collaborating beans other than the enclosing bean.
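In plain Java terms, the inner-bean definition shown earlier amounts to creating a fresh, unnamed Person and passing it straight to the enclosing bean's setter. Outer and Person below are hypothetical stand-ins. The outer bean's class is elided as "..." in the example and is not filled in here. InnerBeanSketch only mimics the resulting wiring, not the container itself.

```java
// Hypothetical stand-in for com.mycompany.Person.
class Person {
    private String name;
    private int age;
    public void setName(String name) { this.name = name; }
    public void setAge(int age) { this.age = age; }
    String getName() { return name; }
    int getAge() { return age; }
}

// Hypothetical enclosing bean with a 'target' property.
class Outer {
    private Person target;
    public void setTarget(Person target) { this.target = target; }
    Person getTarget() { return target; }
}

class InnerBeanSketch {
    // Builds one 'outer' bean. The Person is created inline and never stored under a name,
    // so nothing other than this Outer instance can get hold of it.
    static Outer createOuter() {
        Person inner = new Person();
        inner.setName("Fiona Apple");
        inner.setAge(25);
        Outer outer = new Outer();
        outer.setTarget(inner);
        return outer;
    }
}
```

Because createOuter() builds a new Person on every call and never registers it, two Outer instances never share their inner bean. This loosely mirrors the anonymous, prototype-like behavior described above.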

The <list/>, <set/>,

<map/>, and <props/> elements

allow properties and arguments of the Java Collection

type List, Set,

Map, and Properties,

respectively, to be defined and set.